AI Breakthrough: Robot Walks Freely Using Sim-to-Real Learning

A developer has trained a humanoid robot to walk and navigate challenging terrain using a technique called “sim-to-real” reinforcement learning. The demonstration tackles a long-standing hurdle in robotics: bridging the gap between simulated environments and the unpredictable complexities of the real world.

The Sim-to-Real Challenge

For years, training AI models, especially for robotics, has relied heavily on simulators. These virtual environments offer a controlled space to train complex behaviors, like walking, without the risk of damaging expensive hardware. However, a fundamental problem arises when transferring these trained models to physical robots.

The real world, with its nuanced physics, friction, gravity, and unexpected variables, often behaves differently from its simulated counterpart. This discrepancy is commonly called the sim-to-real gap: on real hardware the model encounters conditions that are “out of distribution” relative to what it saw in training, which can cause it to perform poorly or fail entirely.

The core idea behind sim-to-real is to train an AI model in a simulator and then deploy it on a physical robot, expecting it to perform the learned tasks. The success of this method hinges on how closely the simulation mirrors reality and how robust the AI model is to discrepancies. Historically, this transfer has been notoriously difficult, often requiring extensive fine-tuning on the physical robot, which is time-consuming and risky.

MJLab and Reinforcement Learning

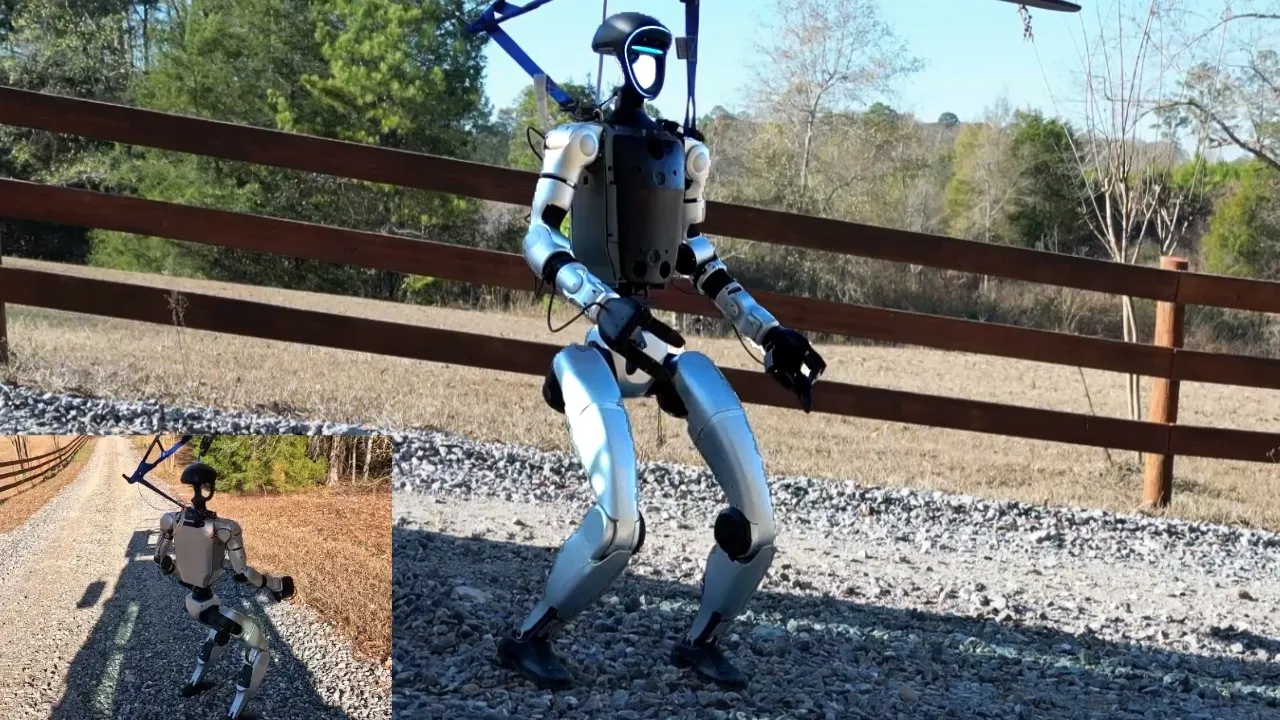

The project uses MJLab, a robot-learning framework built on the MuJoCo physics engine, to train a Unitree G1 humanoid robot. Training relied on reinforcement learning, a type of AI in which an agent learns by trial and error, receiving rewards for desired actions and penalties for undesirable ones. The goal was to teach the robot a general-purpose gait that could be controlled and steered.
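
To make the reward-and-penalty idea concrete, here is a minimal sketch of a velocity-tracking reward of the kind often used in legged-locomotion work. It is not confirmed to be what the developer or MJLab uses: the function names, the `sigma` scale, and the penalty weight are all assumptions chosen for illustration.

```python
import math

def tracking_reward(cmd_vel, actual_vel, sigma=0.25):
    """Reward in (0, 1]; equals 1.0 when the measured velocity matches the command.

    sigma is a hypothetical scale controlling how fast the reward decays with error.
    """
    err_sq = sum((c - a) ** 2 for c, a in zip(cmd_vel, actual_vel))
    return math.exp(-err_sq / sigma)

def action_rate_penalty(action, prev_action, weight=0.01):
    """Penalty (<= 0) that discourages jerky changes between consecutive actions."""
    return -weight * sum((a - p) ** 2 for a, p in zip(action, prev_action))

def step_reward(cmd_vel, actual_vel, action, prev_action):
    """Total per-step reward: reward for following the command plus a smoothness penalty."""
    return tracking_reward(cmd_vel, actual_vel) + action_rate_penalty(action, prev_action)
```

A reward shaped this way is what makes the gait steerable: the agent is paid for matching whatever target velocity it is given, so changing the command at run time changes the behavior.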

A key aspect of this success is the improved quality and capabilities of modern robotic actuators. As actuator technology advances, robots become more accurately representable in simulations.

This increased fidelity in simulation lowers the bar for achieving successful sim-to-real transfer. The developer highlights that the more closely a robot’s behavior can be replicated in a simulator, the easier the sim-to-real process becomes.

Overcoming Simulation-to-Reality Gaps

The developer detailed several critical steps and insights that contributed to the breakthrough:

- Simulator Choice: MJLab was chosen due to its established use in sim-to-real research for Unitree robots. The accessibility of its code and the supportive robotics community, including its author Kevin Zakka, were crucial.

- Sim-to-Sim First: A vital intermediate step was achieving “sim-to-sim” success. This involves ensuring the trained policy works consistently within the simulator under different conditions, using the exact same code pipeline that will be used for the real robot. This helps iron out inconsistencies before moving to the physical world.

- PD Controller Nuances: A significant challenge was the robot’s Proportional-Derivative (PD) controller, which turns target joint positions into motor torques. The default “implicit” PD controller in MJLab, which relies on privileged simulator data, did not translate well to the real robot. Switching to an “explicit” PD controller, written in Python with PyTorch, gave a more consistent transfer because the same code could run identically in simulation and on the real robot. Many experts suggest implicit controllers can also work, but the explicit approach proved critical for this specific project.

- Observation Space Alignment: Another hurdle was the input data used for training. The default velocity-tracking setup in MJLab used linear velocity as a policy input. However, the real Unitree G1 lacks a direct linear velocity sensor. Linear velocity can be estimated from other sensors, but the developer found success by making the training inputs match the sensor data actually available on the real robot.

- Handling Falls: In simulation, episodes typically terminate when a robot falls. This means the AI model never learns from the state of being on the ground. To address this, a “damp” or fallback behavior was implemented for the real robot: if a fall is detected, it goes into a safe, inert state, preventing uncontrolled movements. The long-term goal is to train a policy that can recover from falls.
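
The explicit PD controller and the fall fallback described above can be sketched together in a few lines. This is an illustration under stated assumptions, not the developer’s code: it uses plain Python lists rather than PyTorch to stay self-contained, and the gain values, the `has_fallen` check based on the gravity direction in the torso frame, and its threshold are hypothetical. Treating “damp” as a PD law with the position gain set to zero is also an assumption.

```python
def pd_torques(q_target, q, qd, kp, kd):
    """Explicit PD law per joint: tau = kp * (q_target - q) - kd * qd."""
    return [kp_i * (qt - qi) - kd_i * qdi
            for qt, qi, qdi, kp_i, kd_i in zip(q_target, q, qd, kp, kd)]

def has_fallen(gravity_body_z, threshold=-0.5):
    """Hypothetical fall check.

    gravity_body_z is the z component of the unit gravity vector expressed in
    the torso frame: about -1.0 when upright, rising toward 0 as the torso tips.
    A threshold of -0.5 corresponds to a tilt of more than 60 degrees.
    """
    return gravity_body_z > threshold

def control_step(q_target, q, qd, kp, kd, gravity_body_z):
    """Return (torques, mode) for one control tick."""
    if has_fallen(gravity_body_z):
        # Damping fallback (assumed form): no position tracking, only
        # velocity damping, so the joints go limp instead of thrashing.
        zero_kp = [0.0] * len(kp)
        return pd_torques(q, q, qd, zero_kp, kd), "damp"
    return pd_torques(q_target, q, qd, kp, kd), "policy"
```

Because `pd_torques` depends only on quantities the real robot can measure (joint positions and velocities), the same function can be called from the simulation loop and from the hardware loop, which is the property the sim-to-sim step is meant to verify.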

Real-World Performance

Once the sim-to-real transfer was successful, the Unitree G1, affectionately nicknamed “Jeff,” was put to the test. Equipped with a portable computing setup (a Dell GB10 with 128GB RAM, chosen for its low power draw and compact size), the robot demonstrated remarkable capabilities:

- On-Road and Off-Road Navigation: The robot successfully navigated flat surfaces, slopes, and even thick layers of leaves, conditions that were not explicitly part of its training environment.

- Terrain Adaptation: It showed an ability to balance on uneven terrain, including a foam pit, and handle gravel slopes. While not perfect, its performance was described as impressive, especially considering the complexity of the task and the size of the AI model (reportedly less than 200,000 parameters).

- Controlled Movement: The robot could move forward and backward, with backward movement appearing even more stable. The developer could control its target velocities, and importantly, the robot could stop itself by zeroing out these velocities.
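
To put the reported model size in perspective, the arithmetic below counts the parameters of a small fully connected network. The layer sizes are hypothetical, chosen only to show that a policy of this general shape fits comfortably under 200,000 parameters; the article does not state the actual architecture.

```python
def mlp_param_count(layer_sizes):
    """Total weights plus biases for a fully connected network."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

# Hypothetical sizes: 96 observation inputs, three hidden layers, 29 joint outputs.
sizes = [96, 256, 128, 128, 29]
total = mlp_param_count(sizes)
print(total)  # prints 77981
```

A network this small is tiny next to modern language or vision models, which helps explain why it can run on a compact onboard computer.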

Why This Matters

This achievement has profound implications for the future of robotics and AI:

- Accelerated Development: By making sim-to-real more reliable, the time and cost associated with training robots can be drastically reduced. This opens the door for faster iteration and development of more capable robots.

- Broader Applications: Robots trained with this method could be deployed in a wider range of environments, from hazardous industrial sites and disaster zones to everyday home assistance, performing complex tasks like fetching objects or navigating cluttered spaces.

- Democratization of Robotics: The developer’s emphasis on sharing knowledge and the use of accessible tools like MJLab and LLMs for coding assistance (like Gemini 3 Pro) suggests a path towards making advanced robotics more accessible to researchers and developers.

- AI Robustness: Successfully transferring AI models from simulation to reality demonstrates progress in creating AI systems that are more robust and adaptable to novel situations, a key goal for artificial general intelligence.

Future Outlook

Despite the successes, challenges remain. The robot experienced arm failures during testing, highlighting the physical limitations and durability issues of current hardware.

The developer plans to continue refining the AI model, potentially incorporating visual input and improving its ability to handle more dynamic situations, like recovering from falls. The ultimate goal is to create robots capable of performing complex, real-world tasks, such as assisting in kitchens or tidying up living spaces.

Source: Training a Unitree G1 to Walk w/ Reinforcement Learning (YouTube)