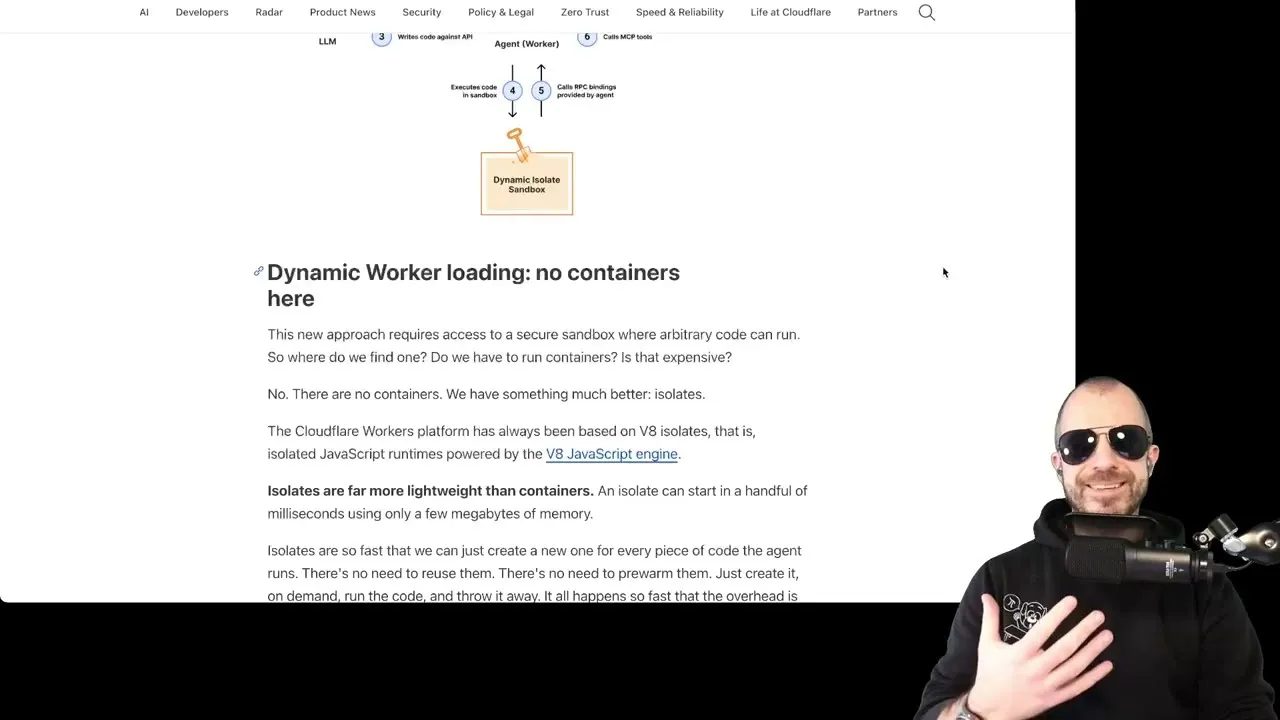

Cloudflare’s Code Mode Boosts LLM Tool Use

Cloudflare is championing a novel approach to integrating Large Language Models (LLMs) with external tools, dubbed ‘Code Mode.’ This method reframes how LLMs interact with functionalities like data retrieval or calculations, moving away from direct conversational tool calls towards generating and executing code that utilizes TypeScript APIs. This shift, detailed in a Cloudflare article, aims to leverage the vast amount of code within LLM training data to enhance their ability to handle complex tasks and a wider array of tools.

The Power of Pre-training

The core innovation of Code Mode lies in its hypothesis about LLM training. While traditional tool calling often involves fine-tuning LLMs on specific, human-curated examples of how to invoke tools and parse their responses (often in JSON format), Code Mode suggests that LLMs possess a more profound, ingrained understanding of programming languages like TypeScript from their initial pre-training phase. This pre-training data likely contains an immense volume of real-world code, including API interactions, making it a more natural fit for LLMs than the often ‘contrived’ examples used in fine-tuning for direct tool calling.

The analogy used to illustrate this point is potent: asking an LLM to perform tool calls directly is akin to asking Shakespeare to write a play in Mandarin after only a month of study. While he might manage, the result would likely be rudimentary and error-prone. In contrast, framing tool calls as TypeScript APIs lets the LLM work in code, a language it already knows well, much like asking Shakespeare to write in English, which leads to more sophisticated and reliable outputs.
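To make the contrast concrete, here is a minimal sketch of the same tool expressed first as a JSON-style description and then as a TypeScript declaration. The `ToolDefinition` shape, the `toTypeScriptDeclaration` helper, and the `getWeather` tool are hypothetical names chosen for illustration; they are not Cloudflare's actual API.

```typescript
// Hypothetical, simplified tool description in the JSON style used by
// direct tool calling. The field names are illustrative assumptions.
interface ToolParam {
  type: "string" | "number" | "boolean";
  description: string;
}

interface ToolDefinition {
  name: string;
  description: string;
  params: Record<string, ToolParam>;
}

// Render the same information as a TypeScript declaration, the kind of
// interface a code-writing model would see instead of a JSON schema.
function toTypeScriptDeclaration(tool: ToolDefinition): string {
  const args = Object.keys(tool.params)
    .map((paramName) => `${paramName}: ${tool.params[paramName].type}`)
    .join(", ");
  return [
    `/** ${tool.description} */`,
    `declare function ${tool.name}(${args}): Promise<unknown>;`,
  ].join("\n");
}

const weatherTool: ToolDefinition = {
  name: "getWeather",
  description: "Look up the current weather for a city.",
  params: {
    city: { type: "string", description: "City name" },
  },
};

const declaration = toTypeScriptDeclaration(weatherTool);
console.log(declaration);
```

The printed declaration looks like ordinary library code, the kind of text the pre-training argument assumes models have seen in bulk.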

Addressing Complex Workflows

Cloudflare’s article highlights that Code Mode particularly shines when agents need to string together multiple tool calls. The traditional method requires the output of each tool call to be fed back into the LLM, processed, and then used to formulate the next call. This iterative process can be token-intensive and inefficient.

Code Mode, by allowing the LLM to write a script that orchestrates these calls, bypasses the need for constant LLM intervention. The LLM can generate a single piece of code that executes a series of tool interactions, only requiring the final results to be returned.

This approach promises to streamline complex operations, such as planning an outfit based on location and weather. Instead of the LLM making separate calls for location and then weather, then processing those results, Code Mode could enable the LLM to generate code that directly calls a location service and then uses that data to query a weather service, all within a single execution block. The LLM would then only receive the final weather forecast, saving time and computational resources.
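The outfit example might look roughly like the following script. This is a sketch under stated assumptions: `getLocation`, `getWeather`, and `planOutfit` are hypothetical stubs with canned values, standing in for whatever services a real agent would be bound to.

```typescript
// Stubbed services standing in for real tools. Names, return shapes, and
// values are hypothetical; a real agent would bind these to actual APIs.
interface Coordinates {
  lat: number;
  lon: number;
}

interface Forecast {
  tempC: number;
  raining: boolean;
}

async function getLocation(): Promise<Coordinates> {
  return { lat: 51.5, lon: -0.12 };
}

async function getWeather(where: Coordinates): Promise<Forecast> {
  // Canned forecast: cooler and wet at higher latitudes.
  return where.lat > 45
    ? { tempC: 9, raining: true }
    : { tempC: 24, raining: false };
}

// The kind of script the model might emit: both tool calls are chained in
// code, and only the final summary would be handed back to the model.
async function planOutfit(): Promise<string> {
  const where = await getLocation();
  const forecast = await getWeather(where);
  const layer = forecast.tempC < 12 ? "a warm coat" : "a light jacket";
  return forecast.raining ? `${layer} and an umbrella` : layer;
}

planOutfit().then((summary) => console.log(summary));
```

The intermediate `where` and `forecast` values never pass through the model; only the summary string does, which is where the token savings would come from.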

The Nuance of Real-World Messiness

However, the video response raises a key objection about how well Code Mode handles highly complex or non-deterministic tasks. Composing API calls into a single code block is appealing, but it assumes that the output of each tool call is predictable and can be fed straight into the next. In reality, tool outputs can be messy and unpredictable.

For instance, a location service might return a GPS coordinate, a street address, or even prompt the user for clarification if data is ambiguous. Similarly, a weather service might provide a forecast, but external factors or user preferences could necessitate a different approach. In such scenarios, the LLM needs to reason about the intermediate outputs and adapt its plan accordingly.

Simply pre-compiling all tool calls into a single script, as Code Mode might suggest, fails to account for this dynamic decision-making process. It is akin to drawing up a rigid plan for a complex task: the plan is likely to break down when unexpected variables emerge.

The speaker argues that this intermediate reasoning is crucial. Humans naturally adapt their plans based on new information. If tool calls are rigidly chained, this adaptability is lost, potentially leading to suboptimal or incorrect outcomes. The advantage of Code Mode might diminish in scenarios where the LLM needs to dynamically adjust its strategy based on the results of previous tool executions, rather than following a pre-determined script.
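The messy-output problem described in this section can be sketched with a location result that has several possible shapes. The `LocationResult` and `StepOutcome` types and the `prepareWeatherQuery` function are hypothetical; the point is only that a generated script needs some way to stop and hand an unexpected intermediate result back to the model instead of pushing on.

```typescript
// Hypothetical shapes a location tool might return: coordinates, a street
// address, or a request for clarification, as described in the text above.
type LocationResult =
  | { kind: "coordinates"; lat: number; lon: number }
  | { kind: "address"; text: string }
  | { kind: "needs_clarification"; question: string };

type StepOutcome =
  | { done: true; query: string }
  | { done: false; reason: string };

// Decide whether the script can continue to the weather lookup or has to
// stop and return the intermediate result for the model to reason about.
function prepareWeatherQuery(loc: LocationResult): StepOutcome {
  switch (loc.kind) {
    case "coordinates":
      return { done: true, query: `${loc.lat},${loc.lon}` };
    case "address":
      return { done: true, query: loc.text };
    case "needs_clarification":
      return { done: false, reason: loc.question };
  }
}

const samples: LocationResult[] = [
  { kind: "coordinates", lat: 51.5, lon: -0.12 },
  { kind: "needs_clarification", question: "Which Springfield do you mean?" },
];

for (const sample of samples) {
  console.log(prepareWeatherQuery(sample));
}
```

A script without the `needs_clarification` branch would either crash or pass a nonsensical query to the weather service, which is the failure mode the speaker is pointing at.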

Potential Solutions and Future Directions

Despite this caveat, Code Mode is still viewed as a significant advancement. Its benefits could still be reaped through a technique analogous to speculative decoding, which might be called ‘speculative tool calling.’ This involves generating a sequence of tool calls upfront but also recording all intermediate outputs. The LLM can then review these intermediate results to ensure they are not ‘suspicious’ or unexpected.

If the intermediate steps appear valid, the final result is accepted. If any step looks problematic, the LLM can intervene, allowing for a more dynamic and adaptive execution flow.
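One way to picture this is a runner that executes every step up front, records a trace, and accepts the final result only if no step was flagged. This is a rough sketch, not an implementation from the source: `Step`, `TraceEntry`, `runSpeculatively`, and the `looksValid` checks are hypothetical, and the simple predicate stands in for the model's own review of each intermediate output.

```typescript
// One recorded step of a speculative run. All names are hypothetical.
interface TraceEntry {
  tool: string;
  output: unknown;
  suspicious: boolean;
}

interface Step {
  tool: string;
  // Receives the previous step's output (undefined for the first step).
  run: (previous: unknown) => Promise<unknown>;
  // Cheap check standing in for the model's review of the output.
  looksValid: (output: unknown) => boolean;
}

interface SpeculativeResult {
  accepted: boolean;
  trace: TraceEntry[];
  result: unknown;
}

// Execute every step up front, recording each intermediate output. The
// final result is accepted only if no recorded step was flagged.
async function runSpeculatively(steps: Step[]): Promise<SpeculativeResult> {
  const trace: TraceEntry[] = [];
  let previous: unknown = undefined;
  for (const step of steps) {
    const output = await step.run(previous);
    trace.push({ tool: step.tool, output, suspicious: !step.looksValid(output) });
    previous = output;
  }
  const accepted = trace.every((entry) => !entry.suspicious);
  return { accepted, trace, result: accepted ? previous : undefined };
}

const nonEmptyString = (o: unknown): boolean => typeof o === "string" && o.length > 0;

const demoSteps: Step[] = [
  { tool: "getLocation", run: async () => "London", looksValid: nonEmptyString },
  {
    tool: "getWeather",
    run: async (city) => `Rain in ${String(city)}`,
    looksValid: nonEmptyString,
  },
];

runSpeculatively(demoSteps).then((r) => console.log(r.accepted, r.result));
```

When `accepted` is false, the recorded `trace` is what would be handed back to the model so it can see which step went wrong and take over from there.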

This approach could offer speed advantages by pre-executing likely tool sequences while retaining the LLM’s ability to monitor and correct the process. The article also touches upon other advanced features within Cloudflare’s implementation, such as loading code into running agents using isolates, which are areas for further exploration.

MCP: A Standard, Not a Magic Bullet

Finally, the discussion touches upon MCP (Model Context Protocol), which is described not as a revolutionary new capability but as a standardized way of exposing APIs. While useful for interoperability, it does not inherently add new functionality. The hype around MCP, the speaker notes, should be tempered with the understanding that it’s a foundational standard for tool exposure.

Cloudflare’s Code Mode represents a promising evolution in LLM-tool interaction, potentially unlocking greater efficiency and capability by aligning with how LLMs are trained. However, the complexities of real-world data and decision-making mean that a purely pre-compiled approach may not always be the optimal solution, necessitating further innovation in adaptive execution.

Source: [Video Response] What Cloudflare's code mode misses about MCP and tool calling (YouTube)