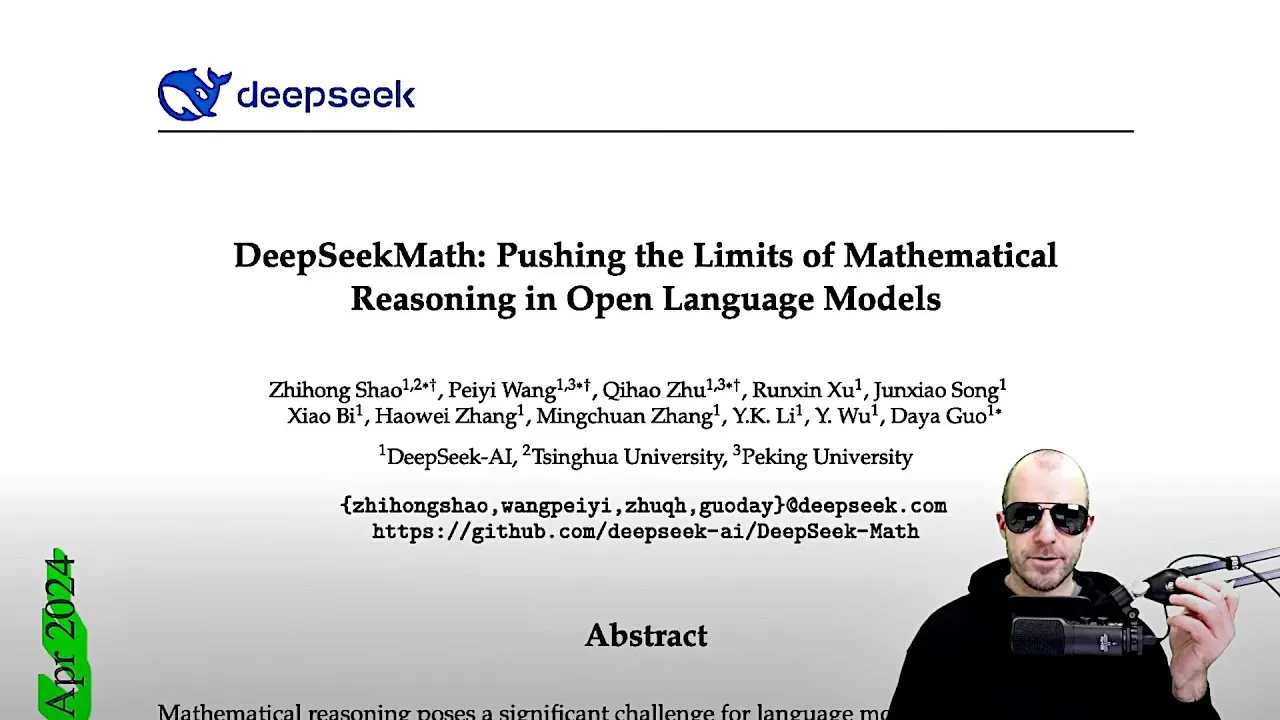

DeepSeekMath Achieves Breakthrough in Open-Source Mathematical Reasoning

DeepSeekMath is an open-source language model that is pushing the boundaries of mathematical reasoning. A recent paper introduces DeepSeekMath 7B, a 7-billion-parameter model that approaches the performance of leading proprietary models like GPT-4 and Gemini Ultra on the competition-level MATH benchmark. This achievement is particularly significant because open-source efforts are typically constrained by fewer resources than their commercial counterparts.

Revolutionary Data Collection and Model Training

The success of DeepSeekMath can be attributed to a two-pronged approach: an innovative method for collecting a massive, high-quality mathematical dataset and the introduction of a novel reinforcement learning technique called Group Relative Policy Optimization (GRPO).

The DeepSeekMath Corpus: Scaling Data Collection

The researchers meticulously curated the ‘DeepSeekMath Corpus,’ a pre-training dataset boasting an astounding 120 billion math tokens. This corpus was derived from Common Crawl, a vast repository of web data.

The key innovation here lies in their systematic, iterative data collection process. Instead of relying solely on pre-existing, often limited, mathematical datasets, they developed a pipeline to extract relevant and high-quality data at scale.

The process begins with a small ‘seed corpus’ of relevant mathematical texts. A fastText classifier is then trained to distinguish between this seed data and random web pages from Common Crawl.

This classifier is used to sift through billions of web pages, initially retaining the top 40 billion tokens that most closely resemble the seed data. This initial set then becomes the seed for the next iteration.
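The retention step can be sketched in a few lines. This is only an illustration of "rank by classifier score, keep the best pages up to a token budget"; the `Page` type, the `retain_top_pages` helper, the example URLs, and the tiny budget are all hypothetical, and the scores stand in for whatever the trained classifier would output.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    n_tokens: int
    score: float  # stand-in for the classifier's "looks like math" score


def retain_top_pages(pages, token_budget):
    """Keep the highest-scoring pages until the token budget is used up."""
    kept, used = [], 0
    for page in sorted(pages, key=lambda p: p.score, reverse=True):
        if used + page.n_tokens > token_budget:
            break  # stop at the first page that would exceed the budget
        kept.append(page)
        used += page.n_tokens
    return kept


# Toy data: the real pipeline works at the scale of billions of tokens.
pages = [
    Page("https://example.org/algebra", 400, 0.97),
    Page("https://example.org/recipes", 300, 0.03),
    Page("https://example.org/calculus", 500, 0.91),
    Page("https://example.org/news", 200, 0.10),
]
kept = retain_top_pages(pages, token_budget=1000)
kept_urls = [p.url for p in kept]
print(kept_urls)
```

With a budget of 1,000 tokens, only the two math-like pages fit; in the pipeline described here the budget would be 40 billion tokens.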

In subsequent iterations, the process expands by identifying ‘math-related domains’: websites where a large share of the crawled pages were classified as mathematical (for example, mathoverflow.net). Within those domains, URL patterns that host mathematical content are annotated manually, and pages under those URLs that the classifier missed are added to the seed corpus. This iterative refinement, with its manual annotation step to ensure quality and diversity, gradually expands the dataset.
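A rough sketch of the domain-level step, assuming a domain is flagged when the share of its pages already classified as mathematical exceeds a threshold. The function name, the example URLs, and the `min_ratio` default are illustrative assumptions, not values taken from this article.

```python
from collections import Counter
from urllib.parse import urlparse


def math_related_domains(all_urls, collected_urls, min_ratio=0.10):
    """Return domains where the collected share of pages exceeds min_ratio."""
    total = Counter(urlparse(u).netloc for u in all_urls)
    hits = Counter(urlparse(u).netloc for u in collected_urls)
    # Counter returns 0 for domains with no collected pages.
    return {domain for domain, n in total.items() if hits[domain] / n > min_ratio}


# Hypothetical crawl: three pages on one domain, two on another.
all_urls = [
    "https://math.example.org/questions/1",
    "https://math.example.org/questions/2",
    "https://math.example.org/users/7",
    "https://shop.example.com/a",
    "https://shop.example.com/b",
]
collected_urls = ["https://math.example.org/questions/1"]

flagged = math_related_domains(all_urls, collected_urls)
print(flagged)
```

Flagged domains are candidates for closer inspection, not automatic inclusion; the manual annotation step still decides which parts of a domain are kept.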

After four iterations, the team had amassed 35.5 million mathematical web pages, totaling 120 billion tokens. The data collection process showed signs of convergence: nearly 98% of the data found in the fourth iteration had already been collected in the third, indicating a stable and comprehensive dataset and a natural point to stop.
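One simple way to quantify this kind of convergence is the overlap between consecutive iterations. The sketch below is an assumption about how such a check could look; the helper name and the toy page identifiers are hypothetical.

```python
def already_collected_fraction(previous, current):
    """Share of the current iteration's pages that the previous one already had."""
    current = set(current)
    if not current:
        return 0.0
    return len(current & set(previous)) / len(current)


# Toy example: the new iteration adds one page to four known ones.
previous_iteration = {"page-a", "page-b", "page-c", "page-d"}
current_iteration = {"page-a", "page-b", "page-c", "page-d", "page-e"}

overlap = already_collected_fraction(previous_iteration, current_iteration)
print(f"{overlap:.0%} of the current pages were already collected")
```

An overlap close to 100%, such as the roughly 98% reported here, suggests that another iteration would add little new data.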

This approach highlights a crucial insight: even within the vastness of the internet, sufficient high-quality mathematical data exists if one possesses the right methods to extract and refine it. This finding has significant implications for domain-specific fine-tuning of LLMs.

Foundation Models and Pre-training Modalities

The DeepSeekMath base model, a 7-billion-parameter model, was initialized from DeepSeek-Coder-Base-v1.5 7B, a model pre-trained on code. The researchers found that pre-training on code proved highly beneficial for mathematical reasoning tasks.

Interestingly, pre-training on datasets like arXiv, while containing mathematical content, showed minimal direct benefit for downstream mathematical problem-solving. This suggests that arXiv papers often focus on communicating mathematical concepts rather than detailing step-by-step derivations, which are crucial for training models to solve problems.

The pre-training mix included their own DeepSeekMath Corpus (56%), alongside other datasets: AlgebraicStack, arXiv, GitHub code, and natural language data from Common Crawl in both English and Chinese. In total, pre-training processed approximately 500 billion tokens.
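A quick back-of-the-envelope calculation shows what these figures imply, assuming the 56% refers to the share of training tokens drawn from the corpus. The variable names are illustrative.

```python
# All quantities in billions of tokens.
total_training_tokens = 500   # approximate size of the pre-training run
corpus_share_pct = 56         # share drawn from the DeepSeekMath Corpus
corpus_size = 120             # size of the DeepSeekMath Corpus itself

corpus_tokens_seen = total_training_tokens * corpus_share_pct / 100
passes_over_corpus = corpus_tokens_seen / corpus_size

print(f"Tokens drawn from the corpus: {corpus_tokens_seen:.0f}B")
print(f"Approximate passes over the corpus: {passes_over_corpus:.2f}")
```

Under that assumption, the model sees about 280 billion corpus tokens, which is more than the corpus contains, so the corpus would be traversed a little over twice during pre-training.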

Instruction Tuning and Reinforcement Learning

Following pre-training, DeepSeekMath underwent instruction fine-tuning. The training data included GSM8K and MATH problems annotated with tool-integrated solutions, a subset of MathInstruct, and the training set of Lila-OOD, with solutions written in Chain-of-Thought (CoT) and Program-of-Thought (PoT) formats. This supervised fine-tuning phase, conducted over roughly 500 steps, significantly boosted the model’s performance, putting it ahead of open-source competitors in many cases and closer to the performance of closed-source giants.

The final stage involved reinforcement learning using GRPO (Group Relative Policy Optimization). GRPO is an advancement over Proximal Policy Optimization (PPO), a common technique in RL. In the context of LLMs, RL aims to optimize the model’s output (action) based on a reward signal, often derived from the correctness of the answer.

Traditional RL methods can be complex, requiring extensive training and often a separate ‘value model’ to estimate future rewards. GRPO simplifies this by removing the need for a value model, freeing up computational resources and memory to focus on the main policy model (the LLM itself).
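In place of a learned value model, GRPO samples a group of responses to the same question and uses the group's own rewards as the baseline. The sketch below shows only that baseline step, not the full training objective; the function name, the `eps` term, and the use of the population standard deviation are assumptions made for illustration.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against the mean and spread of its own group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an illustrative choice
    # eps avoids division by zero when every response gets the same reward.
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled answers to one question: 1.0 = correct, 0.0 = incorrect.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print([round(a, 3) for a in advantages])
```

Responses that score above the group average get a positive advantage and are reinforced, while below-average ones are discouraged, all without training or storing a second network.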

The GRPO algorithm refines the LLM’s policy (its strategy for generating responses) to maximize the reward signal, which in this case reflects the accuracy of the mathematical solution. This RL phase proved instrumental in pushing DeepSeekMath’s performance beyond that of much larger open-source models and close to state-of-the-art closed-source models, with strong results on both English and Chinese mathematical tasks.

Why This Matters

DeepSeekMath represents a significant leap forward for open-source AI. Its ability to rival top-tier proprietary models in a specialized, complex domain like mathematical reasoning demonstrates the power of strategic data collection and advanced training techniques.

The open-source nature of DeepSeekMath means researchers and developers worldwide can access, build upon, and further improve this technology. This democratization of advanced AI capabilities can accelerate progress across various scientific and educational fields, potentially leading to more accessible AI-powered tutoring systems, advanced research tools, and innovative problem-solving applications.

The success also validates the hypothesis that high-quality, domain-specific data, even when extracted from broad web crawls, is a critical factor in achieving state-of-the-art performance. The efficiency gains offered by GRPO could pave the way for more resource-efficient training of powerful AI models in the future.

Availability and Future Prospects

DeepSeekMath is available as an open-source model, allowing the AI community to leverage its advanced mathematical reasoning capabilities. The specific benchmarks and detailed methodology are outlined in the research paper, inviting further scrutiny and development from the global AI research community. As open-source models like DeepSeekMath continue to mature, the gap between proprietary and open-source AI is likely to narrow further, fostering a more collaborative and innovative AI ecosystem.

Source: [GRPO Explained] DeepSeekMath: Pushing the Limits of Mathematical Reasoning in Open Language Models (YouTube)