Understand the Basics of Large Language Models (LLMs)

This guide will quickly explain what Large Language Models, or LLMs, are and how they work. You’ll learn the core ideas behind their training and how they generate text. We’ll cover the main steps in a simple way, making complex ideas easy to grasp.

What Are LLMs?

At their heart, LLMs are advanced prediction machines. They are trained to predict the next word (more precisely, the next token) in a sequence. Think of it like the autocomplete feature on your phone, but far more powerful and complex. This ability to predict the next word is the foundation of everything LLMs do, from answering questions to writing stories.

How LLMs Work: Step-by-Step

Massive Training on Data

LLMs start by learning from an enormous amount of text. This training data is drawn from a large share of the public internet: books, articles, computer code, and countless conversations. We’re talking about trillions of words, which exposes the model to a very wide range of language. This huge dataset is crucial for the model to learn patterns and relationships in human language.
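To make "learning patterns" a little more concrete, here is a deliberately tiny sketch that counts which word follows which in a short text. It is only an illustration: the function name `count_next_words` and the sample sentence are made up for this example, and real LLMs learn billions of numerical parameters with neural networks rather than keeping simple counts.

```python
from collections import Counter, defaultdict


def count_next_words(text):
    """Count, for each word, which words follow it in the text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    # Pair every word with the word that comes right after it.
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


# Hypothetical miniature "training data".
corpus = "the cat sat on the mat . the cat ate the fish ."
counts = count_next_words(corpus)

# Which words were seen after "the", and how often?
print(counts["the"].most_common())
```

Even this toy version picks up that "cat" follows "the" more often than "mat" or "fish" in the sample text, which is the kind of statistical regularity a real model learns at a vastly larger scale.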

Breaking Down Text into Tokens

The model doesn’t read words like we do. Instead, the text is first broken down into smaller pieces called tokens. A token can be a whole word, part of a word, or even a punctuation mark. Each token is then converted into a number, because computers operate on numerical data. This numerical representation is what the model actually processes and analyzes.

Expert Note: Think of tokens like LEGO bricks for language. The LLM uses these bricks, represented by numbers, to build and understand sentences.
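As a rough sketch of this step, the code below splits text into pieces from a small vocabulary and returns their numbers. The vocabulary `VOCAB`, its ID values, and the longest-match rule are assumptions chosen for illustration; real tokenizers learn vocabularies of tens of thousands of pieces from data and use more involved algorithms.

```python
# Hypothetical toy vocabulary: each known piece of text maps to a number.
VOCAB = {"un": 0, "believ": 1, "able": 2, "token": 3, "s": 4, "!": 5, " ": 6}


def tokenize(text, vocab=VOCAB):
    """Split text into known pieces, always taking the longest match."""
    ids = []
    i = 0
    while i < len(text):
        # Try the longest possible piece first, then shorter ones.
        for j in range(len(text), i, -1):
            piece = text[i:j]
            if piece in vocab:
                ids.append(vocab[piece])
                i = j
                break
        else:
            raise ValueError(f"no token covers {text[i]!r}")
    return ids


print(tokenize("unbelievable tokens!"))  # [0, 1, 2, 6, 3, 4, 5]
```

Note how "unbelievable" becomes three tokens and "tokens" becomes two: a single word can be built from several bricks.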

Understanding Relationships with Transformer Architecture

LLMs use a special design called the transformer architecture. Through a mechanism called attention, the model can look at all the tokens in a sentence or piece of text at the same time. Unlike older methods that processed words one by one, transformers can see the whole picture. This helps the model work out how different words relate to each other, even if they are far apart in a sentence, so it captures context and meaning more effectively.
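The idea of every token looking at every other token can be sketched in a few lines. This is a stripped-down version of the attention calculation used in transformers, written under simplifying assumptions: the token vectors are made-up numbers, and the learned query, key, and value projections of a real transformer are left out entirely.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """For each token, mix all token vectors, weighted by similarity."""
    dim = len(vectors[0])
    outputs = []
    for query in vectors:
        # Score this token against every token (including itself).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # The output is a weighted average of all token vectors.
        mixed = [
            sum(w * vec[d] for w, vec in zip(weights, vectors))
            for d in range(dim)
        ]
        outputs.append(mixed)
    return outputs


# Hypothetical 2-number vectors standing in for three tokens.
tokens = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
for row in self_attention(tokens):
    print([round(x, 3) for x in row])
```

Each output row blends information from all three tokens at once, with similar tokens contributing more. No token has to wait for the previous one to be processed.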

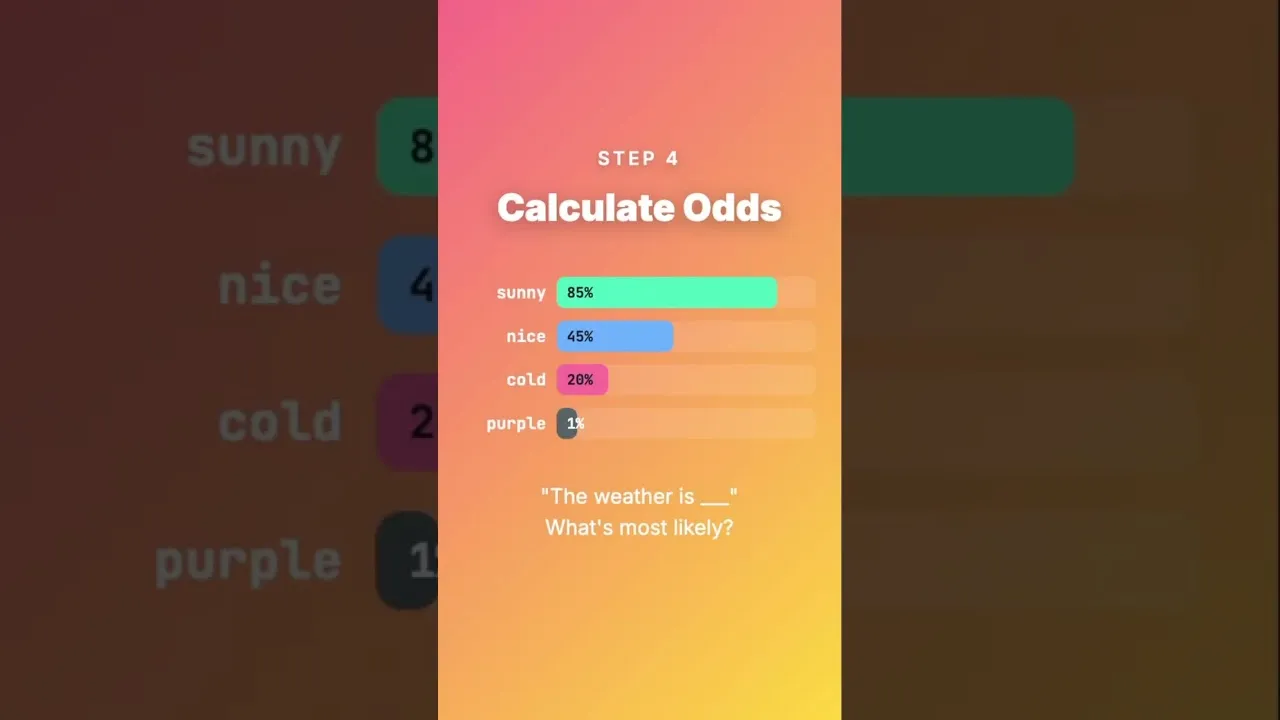

Predicting the Next Word

When you give an LLM a prompt, like a question or the start of a sentence, it gets to work. It calculates the chances, or probabilities, of every possible next word. Based on its training and the context of your prompt, it then picks one: either the single most likely word or, more commonly, a word chosen at random in proportion to those probabilities, which is why the same prompt can produce different answers. This word is then added to the prompt, creating a slightly longer text.
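Here is a small sketch of that choice. The candidate words and their scores are invented for illustration; a real model produces a score for every token in its vocabulary. The scores are converted to probabilities with the softmax function, and the next word is then picked either greedily or by sampling.

```python
import math
import random


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical candidates for finishing "The cat sat on the ...".
candidates = ["mat", "sofa", "moon"]
scores = [2.0, 1.0, 0.1]  # made-up raw scores from the model

probs = softmax(scores)
for word, p in zip(candidates, probs):
    print(f"{word}: {p:.2f}")

# Option 1: always take the most likely word.
greedy = candidates[probs.index(max(probs))]

# Option 2: sample, so less likely words are sometimes chosen.
rng = random.Random(0)  # fixed seed so the run is repeatable
sampled = rng.choices(candidates, weights=probs, k=1)[0]

print("greedy:", greedy)
print("sampled:", sampled)
```

The greedy choice is always "mat" here, while the sampled choice can vary with the random seed.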

Generating Text Token by Token

The process doesn’t stop after one word. The LLM takes the newly formed text (your prompt plus the predicted word) and predicts the next word again. It repeats this process, adding one token at a time, until it produces a special end-of-text token or reaches a length limit. This step-by-step prediction allows the LLM to construct coherent sentences and paragraphs.
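The loop itself is simple, as the sketch below suggests. The lookup table `NEXT_TOKEN` and the `<end>` marker are stand-ins invented for this example. One important simplification: the table only looks at the last token, whereas a real LLM takes the whole text so far into account at every step.

```python
# Hypothetical stand-in for a model: maps the last token to the next one.
NEXT_TOKEN = {
    "the": "cat",
    "cat": "sat",
    "sat": "down",
    "down": "<end>",
}


def generate(prompt_tokens, max_new_tokens=10):
    """Extend the prompt one token at a time until a stop condition."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        # "Predict" the next token from the text generated so far.
        next_token = NEXT_TOKEN.get(tokens[-1], "<end>")
        if next_token == "<end>":
            break  # the model signalled that the response is complete
        tokens.append(next_token)  # feed the longer text back in
    return tokens


print(generate(["the"]))  # ['the', 'cat', 'sat', 'down']
```

The `max_new_tokens` limit plays the role of the length cap: with `max_new_tokens=1`, the same call stops after adding a single token.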

Tip: The quality of the prompt significantly impacts the output. Be clear and specific in your requests to get the best results.

Pattern Matching, Not Thinking

It’s important to remember that LLMs are not actually thinking or understanding in the human sense. They excel at recognizing and matching complex patterns found in the massive amounts of data they were trained on. Their ability to generate human-like text comes from identifying statistical relationships between words and tokens at an incredibly large scale. They are sophisticated pattern-matching machines.

In Summary

You’ve now learned the core concepts of LLMs: training on vast amounts of text, breaking text into numerical tokens, using the transformer architecture to capture context, and generating a response by predicting one token at a time. It’s all about advanced pattern matching on a massive scale.

Source: Learn the basics of LLMs in 60 seconds with Beau Carnes (YouTube)