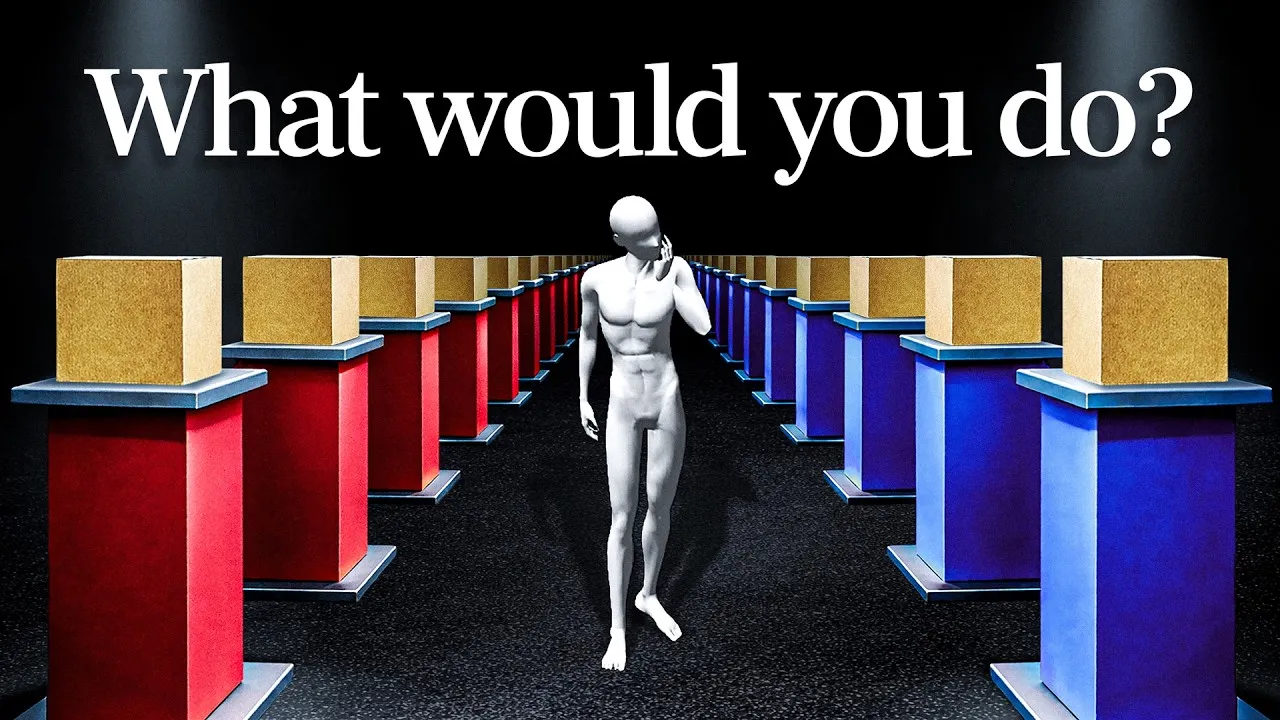

Solve Newcomb’s Paradox: Choose Your Box Wisely

Have you ever faced a choice where two perfectly logical lines of reasoning lead to completely different, yet equally convincing, conclusions? This is the heart of Newcomb’s Paradox, a mind-bending problem that has puzzled thinkers for decades. In this article, you’ll learn about this paradox, explore the arguments for both sides, and understand the deeper questions it raises about decision-making, rationality, and even free will.

Understanding the Setup

Imagine you walk into a room with a powerful supercomputer and two boxes on a table. One box is open and contains $1,000 in cash. There’s no trick; you know the money is real. The second box is closed, and you can’t see inside. You also know this supercomputer is incredibly good at predicting people’s choices. It has correctly predicted the decisions of thousands of people facing this exact problem before you.

The supercomputer presents you with a choice: you can either take both boxes (the open one with $1,000 and the mystery box) or take only the mystery box. Here’s the catch regarding what’s inside the mystery box:

- If the supercomputer predicted you would take *only the mystery box*, it placed $1 million inside it.

- If the supercomputer predicted you would take *both boxes*, it placed nothing in the mystery box.

The supercomputer made its prediction and set up the boxes *before* you even entered the room. Its only goal is to be accurate. It’s not trying to trick you. The question is: do you take both boxes, or do you take just the mystery box?

The Two Camps: One-Boxers vs. Two-Boxers

This problem divides people almost exactly in half. On one side are the “one-boxers,” who believe they should take only the mystery box. On the other side are the “two-boxers,” who believe they should take both boxes. Both sides feel their choice is obvious.

The One-Boxer Argument: Trust the Prediction

One-boxers believe the supercomputer’s accuracy is the most important factor. They reason that since the computer has been right thousands of times before, it likely predicted their choice correctly. If it predicted they would take only the mystery box, then $1 million is waiting inside. Taking both boxes means they would likely have been predicted to take both, leaving them with only the $1,000 from the open box.

This way of thinking is called “evidential decision theory.” It tells you to pick the action that, given that you take it, makes a good outcome most likely. Your choice is treated as evidence about what the predictor foresaw. If the computer is, say, 99% accurate and you choose one box, there is a 99% chance that the $1 million is in there; if you choose both boxes, there is a 99% chance that the mystery box is empty. Therefore, choosing one box gives you by far the best chance of getting $1 million.

Expert Note: This approach focuses on what the choice *reveals* about the prediction that has already been made. It’s like saying, “My decision tells me something about the past, and therefore about what’s in the box.”
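The one-boxer’s arithmetic can be written out as a short sketch. It assumes the dollar amounts from the setup and takes the 99% accuracy figure as an illustrative value that applies equally to both kinds of chooser. The function names `expected_payout` and `break_even_accuracy` are invented for this example.

```python
from fractions import Fraction

BONUS = 1_000        # cash in the open box
PRIZE = 1_000_000    # placed in the mystery box if one-boxing was predicted


def expected_payout(choice: str, accuracy: Fraction) -> Fraction:
    """Expected winnings when the predictor is right with probability
    `accuracy`, whichever choice is made (an assumption of this sketch)."""
    if choice == "one":
        # The prize is there only if the predictor was right.
        return accuracy * PRIZE
    if choice == "two":
        # The prize is there only if the predictor was wrong.
        return BONUS + (1 - accuracy) * PRIZE
    raise ValueError(f"unknown choice: {choice!r}")


def break_even_accuracy() -> Fraction:
    """Accuracy p that solves p * PRIZE = BONUS + (1 - p) * PRIZE."""
    return Fraction(PRIZE + BONUS, 2 * PRIZE)


p = Fraction(99, 100)
print(expected_payout("one", p))       # 990000
print(expected_payout("two", p))       # 11000
print(float(break_even_accuracy()))    # 0.5005
```

Under these assumptions the predictor only needs to be slightly better than a coin flip for one-boxing to have the higher expected payout.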

The Two-Boxer Argument: Control Your Own Gain

Two-boxers argue that the boxes are already set. The money is either in the mystery box or it isn’t, based on a prediction made before they even entered the room. Their current choice cannot change what has already happened.

They look at the situation in two possible scenarios:

- Scenario 1: The $1 million is in the mystery box. If you take only the mystery box, you get $1 million. If you take both boxes, you get $1 million plus the $1,000, for a total of $1,001,000. In this case, taking both boxes is better.

- Scenario 2: There is nothing in the mystery box. If you take only the mystery box, you get $0. If you take both boxes, you get $0 plus the $1,000, for a total of $1,000. In this case, taking both boxes is also better.

Since taking both boxes leaves you exactly $1,000 better off in *either* scenario, it seems like the logical choice. This argument is based on “causal decision theory,” which says you should judge an action only by the outcomes it can actually cause. Your current choice doesn’t cause the past prediction or the money placement.

Expert Note: This approach focuses on what your action *causes*. It’s like saying, “My decision now can’t affect what’s already happened, so I should choose the option that gives me more money regardless of the past.”
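The two scenarios above can be checked mechanically. This is a minimal sketch that treats the contents of the mystery box as a fixed fact and compares the two choices in each case. The names `payout` and `two_boxing_dominates` are invented for this example.

```python
BONUS = 1_000        # cash in the open box
PRIZE = 1_000_000    # possible contents of the mystery box


def payout(choice: str, prize_in_box: bool) -> int:
    """Winnings for a choice, given what is already in the mystery box."""
    mystery = PRIZE if prize_in_box else 0
    if choice == "one":
        return mystery
    if choice == "two":
        return mystery + BONUS
    raise ValueError(f"unknown choice: {choice!r}")


def two_boxing_dominates() -> bool:
    """True if taking both boxes pays more in every possible scenario."""
    return all(payout("two", state) > payout("one", state)
               for state in (True, False))


for state in (True, False):
    print(state, payout("one", state), payout("two", state))
# True 1000000 1001000
# False 0 1000
print(two_boxing_dominates())  # True
```

The check holds the box contents fixed while varying the choice, which is exactly the step one-boxers dispute.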

Why It’s a Paradox

The paradox arises because both arguments seem perfectly reasonable, yet they lead to opposite conclusions. If you’re a one-boxer, you trust the predictor and aim for the $1 million. If you’re a two-boxer, you trust that your current actions can only influence the future and aim for the guaranteed $1,000 plus a chance at the million.

Philosophers have debated this for years. Some argue that the rational choice is to take both boxes, even though one-boxers typically walk away with more money. On this view, the game is “rigged” because the predictor rewards what they consider irrational behavior (choosing one box) with a huge sum.
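The observation that one-boxers tend to end up with more money can be illustrated with a small simulation. This is only a sketch: it models the predictor as matching the player’s actual choice with a fixed probability (0.99 here, an illustrative value), which is itself an assumption a two-boxer might contest. The names `play` and `average_winnings` are invented for this example.

```python
import random

BONUS = 1_000        # cash in the open box
PRIZE = 1_000_000    # placed in the mystery box if one-boxing was predicted


def play(choice: str, accuracy: float, rng: random.Random) -> int:
    """One round: the prediction is fixed first, then the choice is paid out."""
    other = "two" if choice == "one" else "one"
    # Assumption: the prediction matches the choice with probability `accuracy`.
    prediction = choice if rng.random() < accuracy else other
    mystery = PRIZE if prediction == "one" else 0
    return mystery if choice == "one" else mystery + BONUS


def average_winnings(choice: str, accuracy: float = 0.99,
                     rounds: int = 10_000, seed: int = 0) -> float:
    """Mean payout over many independent rounds for a fixed strategy."""
    rng = random.Random(seed)
    return sum(play(choice, accuracy, rng) for _ in range(rounds)) / rounds


print("one-boxers:", average_winnings("one"))
print("two-boxers:", average_winnings("two"))
```

With a highly accurate predictor the one-box average lands near the full prize and the two-box average near the bonus, even though in every individual round the two-boxer would have been $1,000 richer than a one-boxer facing the same box contents.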

Deeper Questions Raised

Newcomb’s Paradox isn’t just about choosing boxes; it makes us think about fundamental questions:

- Does free will exist? If a predictor can accurately foresee your choices before you make them, does that mean your choices are predetermined? The paradox highlights the tension between knowing the future and having the freedom to choose.

- What does it mean to be rational? Is rationality about maximizing your gains in the moment (like the two-boxer), or about trusting evidence and acting in a way that has historically led to the best outcomes (like the one-boxer)?

- Is there an ideal way to act? Sometimes, to achieve the best long-term result, you might need to commit to an action that seems worse in the short term. This is similar to how countries maintain peace through the threat of retaliation (Mutually Assured Destruction, or MAD) or how cooperation is beneficial in repeated games like the Prisoner’s Dilemma.

The Takeaway

Ultimately, there’s no single answer that satisfies everyone. The choice you make reveals something about how you view causality, evidence, and your own decision-making power. Whether you choose one box or two, you’re engaging with a deep philosophical problem that has real-world implications for how we make choices, build trust, and understand our own agency in the world.

Source: This Paradox Splits Smart People 50/50 (YouTube)