AI Grants Humanoid Robot “Jeff” New Eyes with VLM

The Unitree G1 humanoid robot, affectionately nicknamed “Jeff,” is taking a significant leap forward in its capabilities, thanks to the integration of advanced Vision Language Models (VLMs). This development allows the robot to not only perceive its environment with unprecedented flexibility but also to understand and interact with objects based on natural language descriptions. This breakthrough marks a pivotal moment in making robots more intuitive and adaptable to human commands.

Object Detection Reimagined with Natural Language

Traditionally, robotic object detection has been limited to a predefined set of objects that the system is explicitly trained to recognize. This often involves cumbersome processes of retraining or fine-tuning models for new items. However, the Unitree G1 is now leveraging a VLM, specifically the Moonream 2 model, to overcome this limitation. Moonream 2, a relatively small model with just under 2 billion parameters and requiring approximately 5GB of memory, is capable of understanding and identifying objects described in natural language. This means users can simply tell the robot what to look for, such as a “black robotic hand” or a “graphics card,” and the VLM will locate them in its field of view.

The system works by using the VLM to pinpoint the XY coordinates of identified objects. A depth camera then extrapolates the Z-axis (depth) information. This data is translated into movement commands for the robot’s arm. The user interface displays the tracked objects, with a red plus sign indicating the robot’s hand and a yellow plus sign marking the target object. The flexibility here is remarkable; instead of a limited list, the robot can now identify virtually anything that can be described, opening up a vast array of potential applications.

During demonstrations, the system successfully identified and tracked objects like a “red bottle of water” and a “yellow bottle of water.” More abstract descriptions also proved effective, with the robot identifying a “sink” and a “microwave” even when prompted with phrases like “device to heat food.” This capability moves beyond simple object recognition to a more nuanced understanding of an object’s function or characteristics.

Addressing Camera Placement Challenges

A key challenge highlighted in the development is the current camera placement on the robot’s head. When the head is tilted downwards to observe the robot’s own hands, it becomes difficult to see objects on higher surfaces like kitchen countertops. Conversely, tilting the head upwards to see countertops obscures the view of the hands. This limitation suggests a need for multiple cameras or a re-evaluation of the primary sensor’s position. The ideal scenario might involve cameras closer to the grippers or on the robot’s torso to provide a more comprehensive view for manipulation tasks.

The Dexterous Hands Dilemma

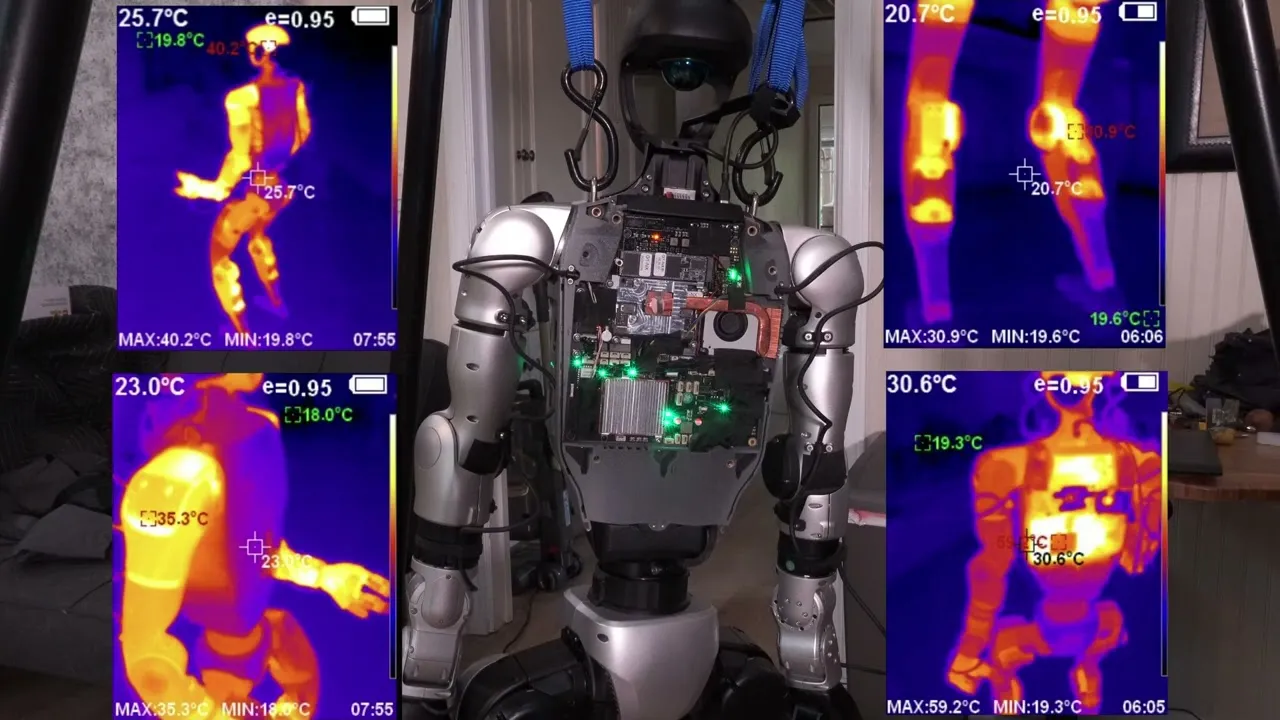

The development also encountered a significant hardware issue: a malfunctioning right hand on the Unitree G1. Initial attempts to program grab functionality were unsuccessful, leading to a debugging process that revealed the right hand was unresponsive. While the left hand worked perfectly, the right hand’s side-specific nature and the lack of necessary adapter boards prevented the use of alternative hands, such as those from the Inspire series. Internal inspection, including thermal imaging, confirmed the right hand was not receiving power, effectively rendering it “dead.” This incident offered a rare glimpse into the robot’s internal architecture, which, interestingly, resembles that of a compact, high-performance laptop, utilizing similar thermal management strategies.

Vision Language Models: The Future of Robotic Understanding

The Moonream 2 VLM, with its ~2 billion parameters, is a powerful yet compact AI model. It can perform various tasks, including generating short or detailed captions, answering questions, and, crucially for this application, performing object detection and pointing. The model’s speed is also impressive, with point detection queries averaging around 140-150 milliseconds, a significant improvement over older methods that required retraining for new objects.

The VLM’s ability to interpret abstract descriptions is a game-changer. For instance, identifying a “thing to heat up food” instead of just “microwave” demonstrates a level of contextual understanding previously unseen in such compact robotic systems. This opens the door for robots to perform more complex tasks that require a deeper comprehension of their environment and the objects within it.

SLAM and Occupancy Grids: Adapting to New Perspectives

Another area of exploration involved adapting the robot’s Simultaneous Localization and Mapping (SLAM) system, particularly its LiDAR-based occupancy grid, to work with the head tilted backward. While the LiDAR sensor continued to function, the altered camera perspective initially caused disorientation in the robot’s understanding of its orientation and the environment. By manually setting an environment variable to account for the head’s tilt angle (e.g., 25 degrees), the system could be recalibrated to generate a more accurate occupancy grid. This demonstrates the potential for fine-tuning sensor data based on known configurations, though slight inaccuracies suggest ongoing challenges with precise angle calibration and dynamic head movements.

Path Planning and Arm Control: The Next Frontier

Improving the robot’s arm control and manipulation capabilities is a primary objective. While inverse kinematics (IK) is a potential solution for smoother arm movements, challenges remain. A significant issue is the discrepancy between perceived depth from the head-mounted camera and the actual depth relative to the robot’s hand. This requires sophisticated calculations to ensure accurate grasping. Furthermore, the robot currently lacks path planning capabilities, meaning it might attempt to move through obstacles or collide with objects.

The path forward likely involves a combination of environmental understanding, path planning algorithms, and an improved arm policy. While simulators can aid in training complex behaviors like gait or manipulation, bridging the gap between simulation and real-world performance, especially with sensor data, remains a difficult task. The current approach emphasizes leveraging real-world data and developing solutions that work directly on the robot, with simulation as a potential future tool.

Why This Matters

The integration of VLMs like Moonream 2 into robots like the Unitree G1 signifies a major step towards more intuitive and versatile humanoid robots. The ability to understand and act upon natural language commands dramatically lowers the barrier to entry for human-robot interaction. This could lead to robots that are more helpful in domestic settings, assisting with chores, or in industrial environments, performing tasks that require adaptability and on-the-fly decision-making. The ongoing work on camera placement, arm control, and path planning, while facing challenges, is crucial for unlocking the full potential of these advanced AI systems in real-world applications.

Source: A bigger brain for the Unitree G1- Dev w/ G1 Humanoid P.4 (YouTube)