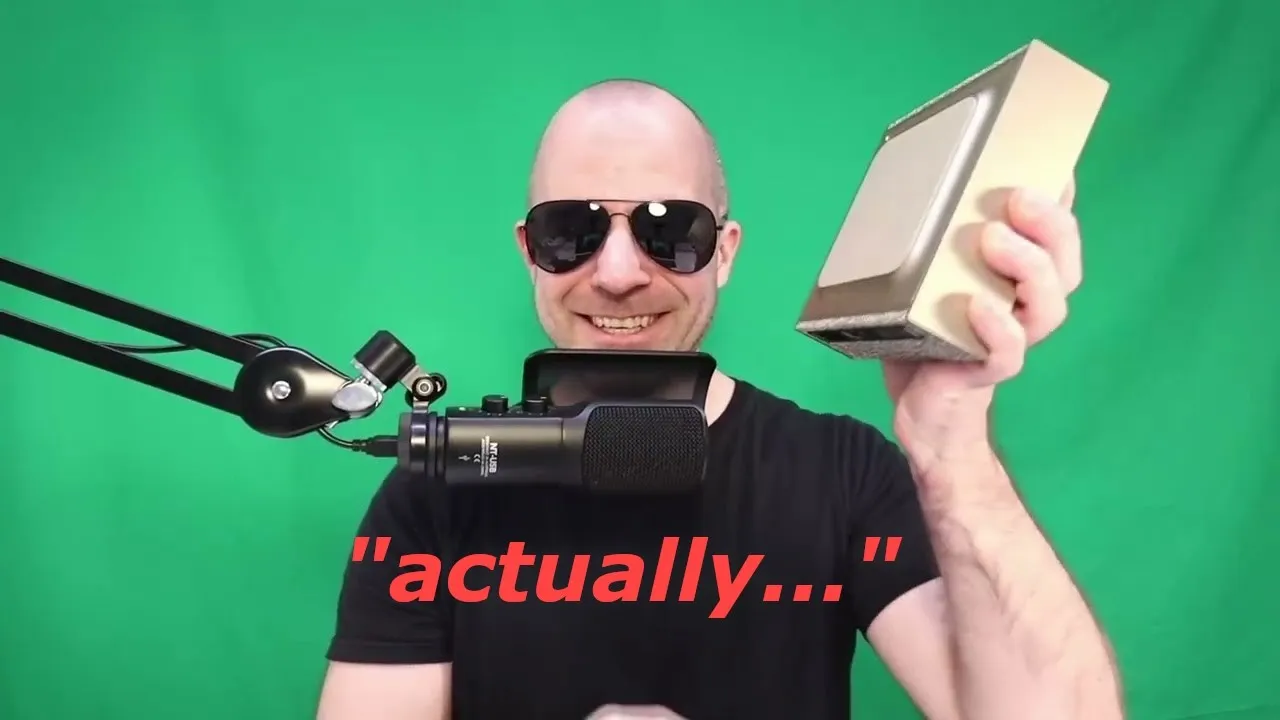

AI Mansplainer Runs Locally on Portable Nvidia DGX Spark

A fully automated ‘mansplainer’ has been built to run entirely on a compact device that fits in a backpack. The project uses the Nvidia DGX Spark, a portable AI supercomputer, to host and chain multiple AI models for real-time conversational analysis and response.

The ‘Mansplainer’ Concept

The core idea behind the project is to create an AI that can detect subtle inaccuracies or vagueness in conversations and offer precise, often pedantic, corrections. The system, demonstrated by its creator, listens to spoken input, processes it, and then delivers a corrective or explanatory response in a synthesized voice. Examples included correcting misconceptions about plastic recycling, the botanical classification of strawberries, and the origins of Sriracha sauce, all delivered with a characteristic, slightly condescending tone.

“You see how powerful this is? Now, we can be everywhere at once. Wherever someone is slightly wrong about something, we can be there to correct them,” the creator explained, highlighting the potential for such a system to be deployed in various scenarios.

Under the Hood: AI Models and the DGX Spark

The ‘mansplainer’ is built by chaining three distinct AI models:

- Whisper: OpenAI’s speech recognition model handles the initial audio processing, transcribing spoken words into text.

- Mistral Medium: This large language model (LLM) takes the transcribed text and generates the ‘mansplained’ content, formulating the corrective or explanatory response.

- VibeVoice: Developed by Microsoft, this model synthesizes the generated text into speech, providing the AI’s voice output. The creator noted that the somewhat annoying German accent used in the demonstration was simply the default voice available in VibeVoice, which they found fitting for the project’s persona.
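The chain described above can be sketched as three functions called in sequence. This is a minimal illustration, not the creator's code: the `Mansplainer` class, the stage type aliases, and the `fake_*` stand-ins are all hypothetical names, and in a real build each stand-in would be replaced by a call into the corresponding model.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stage signatures; the real models would sit behind these callables.
Transcriber = Callable[[bytes], str]   # speech-to-text stage (Whisper's role)
Responder = Callable[[str], str]       # language model stage (Mistral Medium's role)
Synthesizer = Callable[[str], bytes]   # text-to-speech stage


@dataclass
class Mansplainer:
    transcribe: Transcriber
    respond: Responder
    synthesize: Synthesizer

    def run(self, audio_in: bytes) -> bytes:
        """Pass one utterance through all three stages in order."""
        text = self.transcribe(audio_in)
        correction = self.respond(text)
        return self.synthesize(correction)


# Stand-in stages (assumptions) so the sketch runs without any model installed.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_respond(text: str) -> str:
    return f"Well, actually: {text}"


def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")


pipeline = Mansplainer(fake_transcribe, fake_respond, fake_synthesize)
audio_out = pipeline.run(b"strawberries are berries")
```

Keeping each stage behind a plain callable means any one model can be swapped out without touching the other two.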

Crucially, all three models can run simultaneously on the Nvidia DGX Spark. The compact unit has 128GB of unified memory, which serves as both system RAM and GPU VRAM. That is more memory than a single high-end data center GPU such as the H100 (80GB in its standard configuration), which makes it possible to run large, complex models on one device.
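Whether several models fit in memory at once can be roughly estimated from parameter counts and precision. The sketch below is a back-of-the-envelope check only: the model sizes and precisions are hypothetical placeholders, not figures from the demonstration, and the estimate covers weights alone, ignoring activations, KV cache, and the operating system. The budget is passed in as a parameter and set conservatively below the device's total memory.

```python
from typing import List, Tuple


def weights_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate weight memory in GB: parameters times bytes per parameter."""
    return params_billions * bits_per_param / 8


def fits(models: List[Tuple[float, int]], budget_gb: float) -> Tuple[float, bool]:
    """Return total weight memory and whether it stays within the budget."""
    total = sum(weights_gb(params, bits) for params, bits in models)
    return total, total <= budget_gb


# Hypothetical sizes (billions of parameters, bits per parameter).
example_models = [
    (1.5, 16),   # speech-to-text model, 16-bit weights (assumed)
    (24.0, 8),   # language model, 8-bit quantized (assumed)
    (1.5, 16),   # text-to-speech model, 16-bit weights (assumed)
]

total_gb, ok = fits(example_models, budget_gb=100.0)
```

With these assumed sizes the weights come to about 30 GB, which leaves considerable headroom under a 100 GB budget.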

Nvidia DGX Spark: Power and Portability

The Nvidia DGX Spark is presented as a significant step forward in making powerful AI hardware accessible and portable. It’s designed to be a self-contained AI workstation that can fit into a backpack. Beyond its raw processing power, Nvidia has focused on ease of use and developer experience.

On initial setup, the DGX Spark connects to a network, obtains an IP address, and advertises a hostname, making it easy to SSH into the device. It runs an Ubuntu-based Linux distribution and is built around the Nvidia GB10 chip, which pairs CPU and GPU on a unified memory architecture. Because the CPU and GPU share one memory pool, allocation between them is flexible, which is particularly useful for running large models or complex workflows.
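Before opening an SSH session, it can be handy to confirm that the device's hostname resolves and that the SSH port is accepting connections. The following is a small sketch using only the Python standard library; the function name `ssh_port_open` is hypothetical, and port 22 is assumed as the usual SSH default rather than taken from the demonstration.

```python
import socket


def ssh_port_open(host: str, port: int = 22, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS resolution failures, refused connections, and timeouts.
        return False
```

Calling `ssh_port_open("my-spark.local")`, where the hostname is a placeholder for whatever name the device reports on the network, returns `True` once the device is reachable for SSH.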

Nvidia also provides the AI Workbench, a tool designed to simplify the management of AI projects. It facilitates the creation and management of containerized environments, allowing users to easily set up specific versions of CUDA, Python, and deep learning frameworks like PyTorch for different projects. This ensures compatibility and avoids conflicts when working with multiple AI models or applications.

Furthermore, Nvidia offers a suite of ‘playbooks’ and tutorials on its website, guiding users through various AI tasks. These range from setting up local LLM inference (for example, with gpt-oss-20b) and building enterprise applications to fine-tuning models and deploying visual recognition systems. The creator demonstrated setting up a web UI for a local visual language model (VLM), which took approximately two minutes to get running.

Why This Matters: Privacy, Tinkering, and Accessibility

The development of the ‘mansplainer’ on the DGX Spark highlights several key advancements in AI:

- Enhanced Privacy and Autonomy: The ability to run large AI models locally on a device like the DGX Spark means users can leverage powerful AI capabilities without sending sensitive data to the cloud. This is crucial for individuals and organizations concerned about data privacy and security. The creator noted that one or two DGX Sparks could potentially run the world’s largest open-weight models locally.

- Democratization of AI Development: Portable and user-friendly hardware like the DGX Spark lowers the barrier to entry for AI experimentation. Developers, researchers, and hobbyists can now fine-tune models, experiment with new architectures, and deeply customize AI systems in ways that are difficult or impossible when relying solely on cloud APIs or rigid cloud deployments.

- Real-time, Localized AI: The project demonstrates that complex AI tasks, such as real-time audio transcription, natural language understanding, text generation, and speech synthesis, can be performed locally and with relatively low latency, making conversational AI applications more responsive and versatile.

- New Form Factors for AI: The backpack-sized nature of the DGX Spark signifies a shift towards more integrated and mobile AI solutions, moving beyond large server racks or desktop workstations.
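The low-latency claim for a local pipeline is easy to check by timing each stage separately. The helper below is an illustrative sketch, not a measurement from the demonstration: `run_with_latency` and the placeholder stages are hypothetical, and real stages would call the transcription, language, and speech models.

```python
import time
from typing import Any, Callable, Dict, List, Tuple


def run_with_latency(
    stages: List[Tuple[str, Callable[[Any], Any]]], value: Any
) -> Tuple[Any, Dict[str, float]]:
    """Run stages in order, recording wall-clock seconds spent in each one."""
    latencies: Dict[str, float] = {}
    for name, stage in stages:
        start = time.perf_counter()
        value = stage(value)
        latencies[name] = time.perf_counter() - start
    return value, latencies


# Placeholder stages (assumptions) standing in for the three models.
stages = [
    ("transcribe", lambda audio: audio.decode("utf-8")),
    ("respond", lambda text: "Well, actually: " + text),
    ("synthesize", lambda text: text.encode("utf-8")),
]

result, latencies = run_with_latency(stages, b"sriracha is from Thailand")
total_seconds = sum(latencies.values())
```

Per-stage timings show which model dominates the end-to-end delay, which is usually the first thing to know before tuning a conversational system.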

Availability and a Special Offer

The Nvidia DGX Spark is positioned for developers and researchers who value privacy and enjoy hands-on AI experimentation. While pricing details for the DGX Spark were not explicitly stated in the demonstration, the creator mentioned that Nvidia is hosting a raffle for one DGX Spark unit.

To enter the raffle, participants must register for Nvidia’s GTC (GPU Technology Conference) and attend a session other than the keynote. This offer is specifically for individuals in the EMEA (Europe, Middle East, and Africa) region. Further details and registration links are available via a link provided by the creator.

The ‘mansplainer’ project is whimsical, but it serves as a proof of concept for running a chain of advanced AI models on accessible, portable hardware, and it points toward more localized and personalized AI applications.

Source: I BUILT A FULLY AUTOMATIC MANSPLAINER (YouTube)