DeepMind AI Reconstructs Moving Scenes in 4D

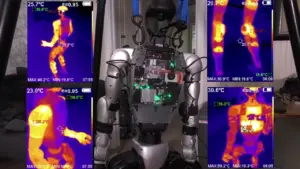

Google DeepMind has unveiled an AI model that reconstructs entire scenes in four dimensions (three spatial dimensions plus time) with unprecedented speed and accuracy. The model, dubbed D4RT (Dynamic 4D Reconstruction and Tracking), captures and represents the intricate movements within a scene from video, a feat that previously required complex multi-model systems and significant computational overhead.

From Static to Dynamic Reconstruction

Traditionally, creating 3D representations of scenes involved capturing static environments. However, the real world is dynamic. Objects move, people interact, and scenes evolve over time. Reconstructing these dynamic scenes, especially capturing how objects move and change position, has been a significant challenge in computer vision and AI.

Previous approaches often relied on stitching together multiple specialized AI models. One model might handle depth estimation, another motion tracking, and a third camera pose. Researchers described the resulting patchwork as an “abomination.” Because the models were trained separately, their outputs had to be reconciled through a process called test-time optimization: an iterative fitting step, run on every new video, that could take minutes while the system laboriously forced the disparate models to agree and removed geometric inconsistencies.
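To give a feel for what a test-time optimization loop involves, here is a deliberately tiny sketch. It is not taken from any of the earlier systems: it assumes a hypothetical setup in which one model's depth values are only known up to a scale and shift, and gradient descent is used at inference time to make them agree with depths implied by a second model. The function name `align_depth` and all numbers are illustrative.

```python
def align_depth(pred, ref, steps=2000, lr=0.05):
    """Fit scale s and shift b so that s * pred + b matches ref (least squares)."""
    s, b = 1.0, 0.0
    n = len(pred)
    for _ in range(steps):
        # Disagreement between the rescaled prediction and the reference.
        residuals = [s * p + b - r for p, r in zip(pred, ref)]
        grad_s = 2.0 * sum(e * p for e, p in zip(residuals, pred)) / n
        grad_b = 2.0 * sum(residuals) / n
        s -= lr * grad_s
        b -= lr * grad_b
    return s, b

pred = [1.0, 2.0, 3.0, 4.0]   # depth model output, arbitrary units (made-up values)
ref = [2.5, 4.5, 6.5, 8.5]    # depths implied by a second model (here 2 * pred + 0.5)
scale, shift = align_depth(pred, ref)
```

Even this two-parameter toy needs thousands of iterations to settle near a scale of 2 and a shift of 0.5. Real pipelines optimize far more variables per video, which is where the minutes of extra processing come from.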

Introducing D4RT: A Unified Approach

D4RT, pronounced “dart,” revolutionizes this process by using a single transformer model. This one model estimates depth, motion, and camera pose together, with no separate components to integrate. The unified approach drastically simplifies the reconstruction pipeline and eliminates the need for time-consuming optimization loops.

One of the most impressive capabilities of D4RT is its ability to handle occlusion. This means the AI can infer the position and trajectory of objects even when they are temporarily hidden from view, such as when one object passes in front of another. It achieves this by leveraging its understanding of the object’s past movements and predicting its future position based on the overall scene dynamics observed throughout the video.

Unprecedented Speed and Efficiency

The performance leap offered by D4RT is staggering. Researchers report that it can be up to 300 times faster than previous methods for 4D reconstruction. This dramatic speed increase is attributed to its single-model architecture and its ability to process information in a highly parallelizable manner, akin to having numerous independent “elves” working on different parts of the scene simultaneously without constant communication overhead.

The output of D4RT is a point cloud representation of the scene. This format is very flexible for dynamic scenes, but it comes with certain limitations. Unlike 3D meshes, point clouds are not directly suitable for tasks like 3D printing or physics simulation without an additional meshing step. Furthermore, D4RT prioritizes geometric accuracy over photorealism, so its output may lack the visually rich, reflective surfaces that methods such as Gaussian splatting or textured 3D meshes can render.
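A small sketch may help show why a point cloud is convenient for motion but awkward for meshing. The array layout below is an assumption made for illustration, not D4RT's actual output format: `tracks[p, t]` holds the (x, y, z) position of point `p` at frame `t`, and the positions themselves are invented.

```python
import numpy as np

num_points, num_frames = 4, 3

# Hypothetical layout: tracks[p, t] is the (x, y, z) position of point p at frame t.
tracks = np.zeros((num_points, num_frames, 3))
tracks[:, :, 0] = np.arange(num_points)[:, None]         # points spaced along x
tracks[:, :, 1] = 0.5 * np.arange(num_frames)[None, :]   # all points drift along y over time

snapshot = tracks[:, 1]                       # the point cloud at frame 1, shape (4, 3)
displacement = tracks[:, -1] - tracks[:, 0]   # per-point motion from first to last frame

# A mesh would also need connectivity, for example a (num_faces, 3) array of
# vertex indices. Nothing in `tracks` says which points form a surface, which
# is why a separate meshing step is required.
```

Slicing along the time axis gives a static cloud for any frame, and subtracting frames gives motion directly. Surface connectivity is the piece that is missing.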

How D4RT Works: A Glimpse Under the Hood

At its core, D4RT uses an encoder-decoder architecture. The encoder acts as a “master carpenter,” analyzing the input video once to build a global understanding of the scene’s past and present. The decoder, made up of “magic elves,” then constructs the 4D representation. Instead of building the entire scene at once, each elf answers a single query: where is this specific point at this specific time? Because the queries do not depend on one another, this decentralized approach allows for immense scalability and speed.
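The encode-once, query-many pattern described above can be sketched in a few lines of NumPy. This is a toy with random weights, not the published architecture: the query layout `(u, v, t_src, t_tgt)`, the feature width `D`, the patch-averaging encoder, and the single cross-attention step are all assumptions chosen to keep the example short.

```python
import numpy as np

D = 16  # feature width, chosen arbitrarily for this sketch

def init_params(seed=0):
    rng = np.random.default_rng(seed)
    return {
        "w_tok": rng.standard_normal((3, D)) * 0.1,  # patch colour -> token
        "w_qry": rng.standard_normal((4, D)) * 0.1,  # (u, v, t_src, t_tgt) -> query vector
        "w_out": rng.standard_normal((D, 3)) * 0.1,  # attended feature -> (x, y, z)
    }

def encode(video, params, patch=4):
    """Run once per video: average each patch and project it to a token."""
    t, h, w, c = video.shape
    patches = video.reshape(t, h // patch, patch, w // patch, patch, c).mean(axis=(2, 4))
    return patches.reshape(-1, c) @ params["w_tok"]   # (num_tokens, D)

def decode(queries, tokens, params):
    """Each query row cross-attends to the shared tokens on its own."""
    q = queries @ params["w_qry"]                     # (Q, D)
    scores = q @ tokens.T / np.sqrt(D)                # (Q, num_tokens)
    scores -= scores.max(axis=1, keepdims=True)       # numerical stability
    attn = np.exp(scores)
    attn /= attn.sum(axis=1, keepdims=True)           # softmax over tokens
    return (attn @ tokens) @ params["w_out"]          # (Q, 3): one 3D point per query

params = init_params()
video = np.random.default_rng(1).random((8, 16, 16, 3))  # 8 frames of 16x16 RGB noise
tokens = encode(video, params)

queries = np.array([
    [0.25, 0.50, 0.0, 0.0],   # pixel (u, v) seen in frame 0, located at time 0
    [0.25, 0.50, 0.0, 7.0],   # the same pixel, located at time 7
    [0.80, 0.10, 3.0, 5.0],
])
batch = decode(queries, tokens, params)
one_by_one = np.vstack([decode(q[None, :], tokens, params) for q in queries])
```

Decoding the three queries together or one at a time gives the same answers, because no query reads another query's result. That independence is what lets the work be spread across many parallel workers.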

A clever enhancement involves feeding the original high-resolution video pixels back into the decoder. This allows the “elves” to reconstruct details finer than their internal representation, effectively giving them “magic glasses” to see with greater clarity. This technique is crucial for achieving high-fidelity reconstruction even in complex, fast-moving scenes.
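One plausible way to picture the “magic glasses” is to attach a small crop of the original frame to each query. The sketch below is an assumption about how that could look, not the method from the paper. The helper names `local_patch` and `query_features`, the 3x3 patch size, and the `(u, v, t_src, t_tgt)` query layout are all hypothetical.

```python
import numpy as np

def local_patch(frame, u, v, size=3):
    """Crop a size x size RGB patch centred on normalised coordinates (u, v)."""
    h, w, _ = frame.shape
    half = size // 2
    col = int(round(u * (w - 1)))
    row = int(round(v * (h - 1)))
    # Edge-pad so that patches near the border keep the full size.
    padded = np.pad(frame, ((half, half), (half, half), (0, 0)), mode="edge")
    return padded[row:row + size, col:col + size]

def query_features(frame, u, v, t_src, t_tgt, size=3):
    """Concatenate the query coordinates with the raw pixels around the query point."""
    patch = local_patch(frame, u, v, size).reshape(-1)
    return np.concatenate([[u, v, t_src, t_tgt], patch])

frame = np.arange(5 * 5 * 3, dtype=float).reshape(5, 5, 3)  # stand-in high-resolution frame
centre = local_patch(frame, 0.5, 0.5)
corner = local_patch(frame, 0.0, 0.0)
features = query_features(frame, 0.5, 0.5, t_src=0.0, t_tgt=2.0)
```

The decoder input then carries pixel-level detail for exactly the location being asked about, without the encoder's global tokens needing to store the whole video at full resolution.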

Why This Matters

The implications of D4RT are vast. Its ability to capture dynamic scenes in four dimensions opens doors for advancements in:

- Virtual and Augmented Reality: Creating more immersive and realistic virtual environments that accurately reflect real-world motion.

- Robotics and Autonomous Systems: Enhancing robots’ understanding of dynamic environments for better navigation and interaction.

- Film and Animation: Streamlining the process of capturing complex motion for visual effects and animated content.

- Sports Analysis: Providing detailed 4D reconstructions of athletic performances for training and analysis.

- Digital Twins: Building more accurate and dynamic digital replicas of physical systems and environments.

This research is a collaboration among Google DeepMind, University College London, and the University of Oxford, highlighting the power of open academic and industry collaboration in pushing the boundaries of AI. When tools this capable are released freely, as often happens, they make advanced AI research far more accessible.

Source: DeepMind’s New AI Tracks Objects Faster Than Your Brain (YouTube)