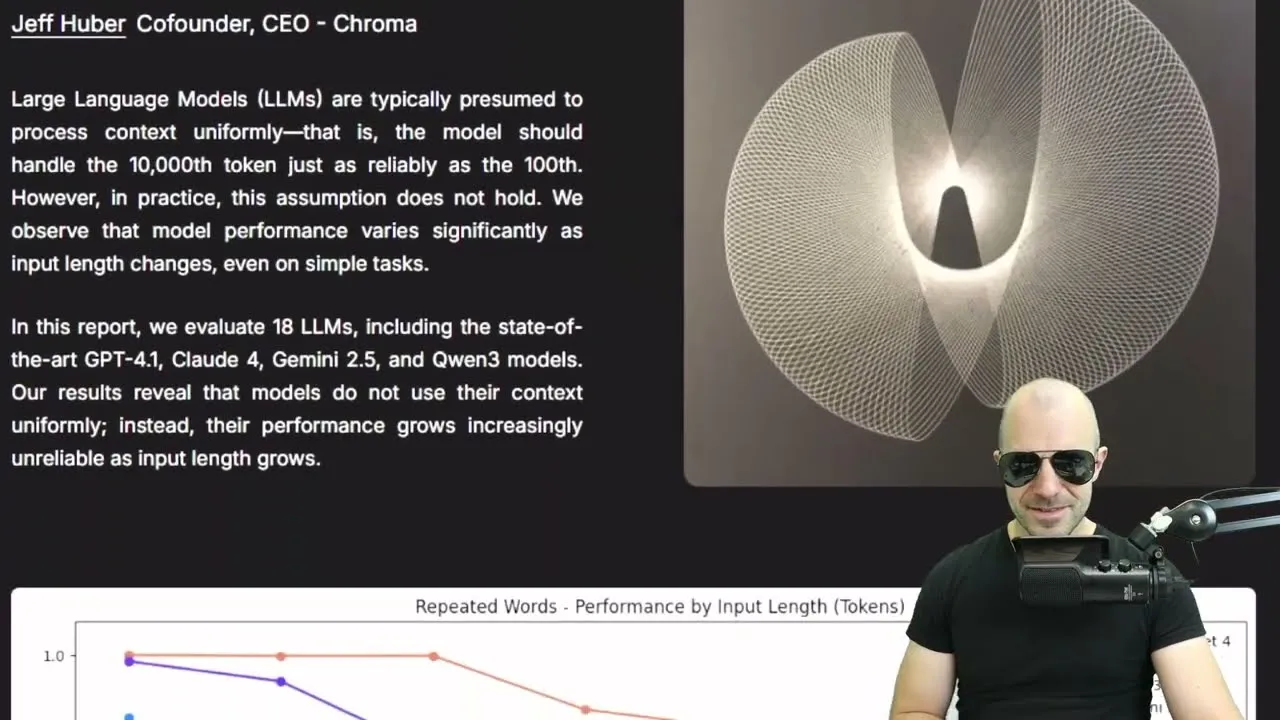

Long Context LLMs Struggle as Input Grows, Study Finds

While large language models (LLMs) boast ever-increasing context windows, a new paper from researchers at Chroma reveals that their performance significantly degrades as the input length grows, especially on more complex tasks. This challenges the notion that simply stuffing more information into an LLM’s context is always the best approach.

Context Rot: The Hidden Downside of Long Context Windows

The proliferation of LLMs with massive context windows, some reaching hundreds of thousands of tokens, has led to a common practice of “context engineering” – essentially, feeding models large amounts of information to inform their responses. Companies like Anthropic (Claude), Google (Gemini), and OpenAI (GPT) have all pushed the boundaries of context length.

Traditionally, LLM providers have validated these long-context capabilities using “needle in a haystack” evaluations. This involves embedding a specific piece of information (the “needle”) within a large body of text (the “haystack”) and then asking the model a question that requires retrieving that specific fact. While models often perform well on these tests, the Chroma paper argues that these evaluations are too simplistic.

The core issue, termed “context rot” by the researchers, is that performance doesn’t scale linearly with context length. As the input grows and tasks become even slightly more complex, LLMs begin to struggle. This degradation is not uniform across all models, with some holding out longer than others, but a downward trend in performance is consistently observed.

Beyond Simple Retrieval: New Challenges for LLMs

The Chroma paper introduces variations on the “needle in a haystack” task to better reflect real-world complexities. These variations include:

- Needle-Question Similarity: Evaluating how well models perform when the question and the target information have varying degrees of lexical or embedding similarity. Performance drops significantly when the question doesn’t closely match the wording of the answer.

- Distractors: Introducing pieces of text that are lexically similar to the target information but are incorrect answers. The presence of these distractors, especially multiple ones, significantly confuses LLMs and degrades performance, increasing the likelihood of hallucinations. This mirrors real-world scenarios where data is often noisy and contains misleading information.

- Haystack Structure: Comparing performance on coherent text versus shuffled, incoherent text. Surprisingly, models sometimes perform better on shuffled text, suggesting that coherent text might engage more complex processing that distracts from simple retrieval.

The researchers tested models from the Claude, GPT, and Gemini families, as well as open-source models like those from the Quwen family. Across these models, the trend remained: increasing input length and task complexity led to performance degradation.

The Long Memory Evaluation (Long-MEval) Benchmark

A significant part of the study involved re-evaluating the Long-MEval benchmark, which is designed to test LLMs’ ability to retain and utilize information over extended interactions. Chroma researchers focused on three subtasks: knowledge updates, temporal reasoning, and multi-session conversations.

The study compared two conditions:

- Full Context: The entire conversation or collection of conversations, averaging around 113,000 tokens, was fed to the model, often containing irrelevant information and distractors.

- Focused Context: Only the specific, relevant pieces of information needed to answer the question were provided to the model.

The results were stark. The focused context condition consistently and significantly outperformed the full context condition, even though the underlying task and the relevant information remained the same. This indicates that models struggle to sift through vast amounts of information, even when it fits within their context window. Simply providing more context does not equate to better understanding or retrieval if that context is not carefully curated.

Why This Matters: Smarter Context Engineering is Key

The findings have critical implications for how we use and develop LLMs:

- Beyond Raw Context Length: The sheer size of a context window is not the sole determinant of an LLM’s effectiveness. The quality and structure of the information within that window are paramount.

- The Value of Retrieval: The research strongly supports the utility of retrieval-augmented generation (RAG) and similar techniques. Systems that can intelligently identify and retrieve only the most relevant information before passing it to the LLM will likely achieve superior performance compared to simply dumping all available data into the prompt.

- Rethinking Evaluation: Current benchmarks, like the basic “needle in a haystack” tests, may not adequately capture the real-world limitations of long-context LLMs. More rigorous evaluations that incorporate task complexity and realistic data noise are needed.

- Developer Implications: For developers building LLM-powered applications, this highlights the importance of investing in sophisticated context engineering. Rather than relying solely on larger context windows, focus on methods to distill and present information effectively to the model.

While the Chroma paper’s findings are particularly beneficial for companies like Chroma, which specialize in retrieval engines and vector databases, the underlying message is broadly applicable. The era of simply maximizing token count may be giving way to an era where intelligent information management is the true key to unlocking the full potential of large language models.

Source: Context Rot: How Increasing Input Tokens Impacts LLM Performance (Paper Analysis) (YouTube)