NVIDIA AI Reconstructs Reality From Photos

NVIDIA has unveiled an AI technique that reconstructs realistic 3D scenes from a collection of 2D images while handling a problem that has plagued previous methods: photos of the same scene rarely agree on brightness and color. The approach, dubbed PPISP (for physically plausible ISP, where ISP is the image signal processor that turns raw sensor data into a finished photo), promises to enhance applications ranging from virtual world creation and movie production to autonomous vehicle training.

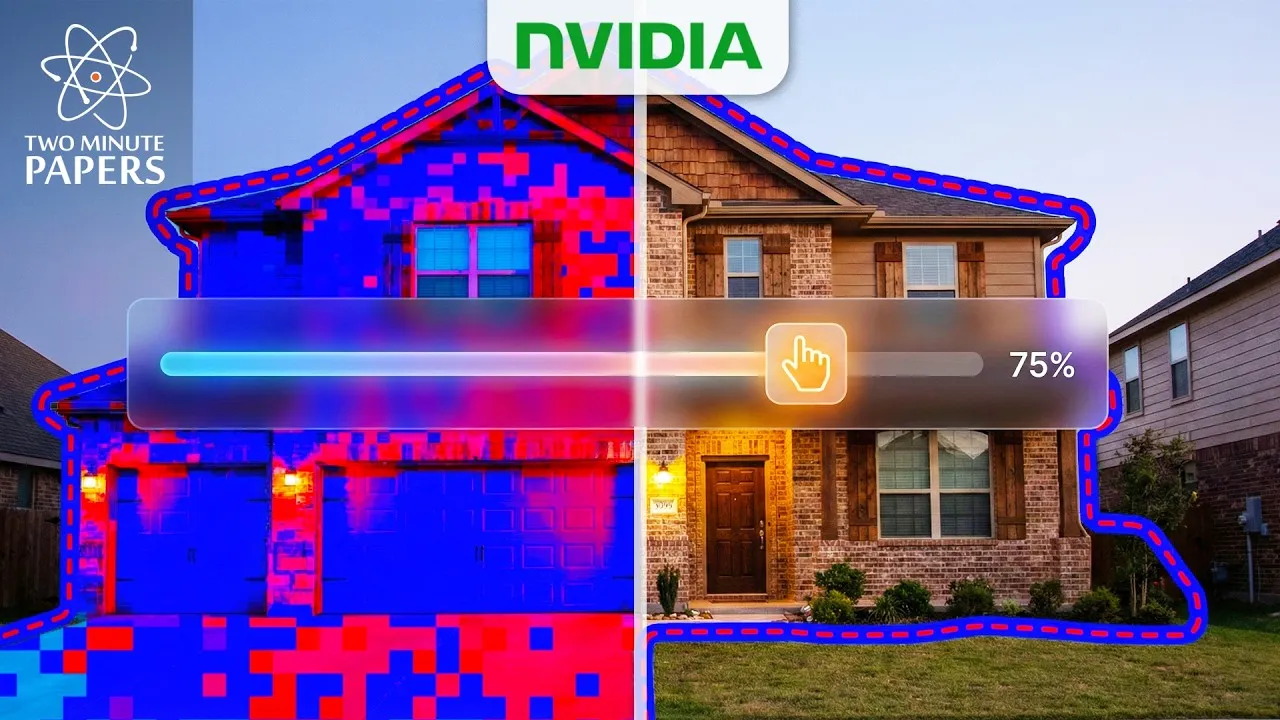

For years, researchers have explored techniques like Neural Radiance Fields (NeRF) to generate novel views of a scene from a set of input images. While NeRF and its successors can create impressive results, they often struggle with visual artifacts such as ‘floaters’ – ghostly, semi-transparent objects that appear in the reconstructed 3D models. These artifacts arise from inconsistencies in lighting and camera parameters across the input images.

The Problem: Inconsistent Lighting and Camera Settings

Imagine trying to photograph a house. Each photo you take might be at a different time of day, under varying light conditions, and from different angles. Furthermore, automatic camera settings like exposure and white balance adjust based on the ambient light, leading to drastic color and brightness shifts between frames. Previous 3D reconstruction algorithms, when faced with these variations, would incorrectly interpret these lighting changes as actual changes in the object’s color or geometry, leading to the problematic ‘floaters’ and blurry, unrealistic models.

This is akin to trying to understand a house’s true color when you’re wearing different colored sunglasses each time you look at it. The sunglasses (camera settings) distort the reality of the house (the scene). The AI would mistakenly believe the house itself was changing color, not that the viewing conditions were different.

NVIDIA’s PPISP: The Master Detective AI

NVIDIA’s PPISP tackles this challenge by acting like a ‘master detective.’ Instead of focusing solely on the scene itself, it analyzes the ‘sunglasses’ – the camera’s parameters for each image. It identifies and accounts for variations in exposure and white balance, and even for lens imperfections like vignetting (darkening at the edges of the image) and for the camera’s response curve (the non-linear mapping a camera applies when it turns measured light into pixel values).

“It actually understands what the house looks like,” the researchers explain, highlighting how the AI surgically removes the effects of these ‘sunglasses’ to reveal the true color and form of the scene. This is achieved by solving for a ‘color correction matrix’ for each image, which essentially describes how the camera altered the colors. By reverting these changes, PPISP reconstructs a more accurate and photorealistic representation of reality.
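To make the idea of reverting a color matrix concrete, here is a minimal sketch in plain Python. The matrix values and the helper names `mat_vec` and `invert_3x3` are illustrative assumptions. The article does not say how PPISP parameterizes or estimates its matrices, and in a real system they would be solved for during reconstruction rather than handed over in advance.

```python
def mat_vec(m, v):
    """Multiply a 3x3 matrix (list of rows) by an RGB vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]


def invert_3x3(m):
    """Invert a 3x3 matrix using the adjugate (cofactor) formula."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    if abs(det) < 1e-12:
        raise ValueError("matrix is singular and cannot be undone")
    adj = [
        [e * i - f * h, c * h - b * i, b * f - c * e],
        [f * g - d * i, a * i - c * g, c * d - a * f],
        [d * h - e * g, b * g - a * h, a * e - b * d],
    ]
    return [[x / det for x in row] for row in adj]


# Hypothetical "sunglasses": a warm color cast with slight channel mixing.
camera_matrix = [
    [1.20, 0.05, 0.00],
    [0.02, 1.00, 0.03],
    [0.00, 0.04, 0.80],
]

true_color = [0.8, 0.5, 0.2]                      # what the house really looks like
observed = mat_vec(camera_matrix, true_color)     # what this photo recorded
recovered = mat_vec(invert_3x3(camera_matrix), observed)
```

Once the matrix is known, undoing it is ordinary linear algebra. The hard part, which this sketch skips, is estimating a separate matrix for every photo without ever seeing the true colors.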

How PPISP Works: Deconstructing the Image

The PPISP process involves several key steps:

- Exposure Offset: The AI first estimates how much brighter or darker each image is overall compared with the rest of the set.

- White Balance Correction: It then identifies and removes color casts introduced by lighting conditions, effectively taking off the ‘colored sunglasses.’

- Vignetting Correction: PPISP learns the natural darkening effect at the edges of images caused by real camera lenses, correcting for this imperfection.

- Camera Response Curve Flattening: Finally, it accounts for the non-linear way cameras turn captured light into pixel values, undoing that curve so that pixel values are proportional to the light in the scene.
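The four corrections above can be pictured as one small camera model that is run forward to explain a photo and backward to recover the scene. The sketch below is a simplification under stated assumptions: exposure is a power-of-two gain, white balance is one gain per channel, vignetting is a quadratic falloff, and the response curve is a plain gamma. The `CameraParams` fields and both function names are hypothetical, not PPISP's actual parameterization.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class CameraParams:
    exposure_ev: float                    # assumed: brightness offset in stops
    wb_gains: Tuple[float, float, float]  # assumed: per-channel white balance gains
    vignette_k: float                     # assumed: quadratic falloff strength (negative darkens edges)
    gamma: float                          # assumed: response curve modeled as a simple gamma


def render_pixel(radiance: List[float], r: float, p: CameraParams) -> List[float]:
    """Forward model: scene radiance -> pixel value, at radius r from the image center."""
    gain = 2.0 ** p.exposure_ev
    falloff = 1.0 + p.vignette_k * r * r
    out = []
    for value, wb in zip(radiance, p.wb_gains):
        linear = value * gain * wb * falloff
        linear = min(max(linear, 0.0), 1.0)   # clipped values cannot be recovered later
        out.append(linear ** (1.0 / p.gamma))
    return out


def recover_radiance(pixel: List[float], r: float, p: CameraParams) -> List[float]:
    """Inverse model: undo the response curve, vignetting, white balance, and exposure."""
    gain = 2.0 ** p.exposure_ev
    falloff = 1.0 + p.vignette_k * r * r
    return [(v ** p.gamma) / (gain * wb * falloff) for v, wb in zip(pixel, p.wb_gains)]


scene = [0.2, 0.3, 0.1]
noon = CameraParams(exposure_ev=0.5, wb_gains=(1.2, 1.0, 0.8), vignette_k=-0.3, gamma=2.2)
dusk = CameraParams(exposure_ev=1.5, wb_gains=(0.9, 1.0, 1.3), vignette_k=-0.3, gamma=2.2)

photo_noon = render_pixel(scene, 0.7, noon)
photo_dusk = render_pixel(scene, 0.7, dusk)
```

The two photos record different pixel values for the same point, yet `recover_radiance` returns the same scene value from either one when given the matching parameters. That agreement is what lets a reconstruction stop mistaking camera changes for changes in the scene.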

By solving these four ‘puzzles’ separately, PPISP doesn’t just create a visually appealing image; it mathematically reconstructs the underlying reality that the camera’s imperfect lens and settings obscured. The researchers noted that the controller developed for fixing exposure in new views functions remarkably similarly to the auto-exposure systems found in modern smartphones, suggesting an AI-driven reinvention of digital camera ‘brains.’
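For comparison, a textbook-style auto-exposure rule fits in a few lines. This is not PPISP's controller, whose design the article does not describe. It is a minimal sketch that assumes the goal is to bring the average luminance of a view to a mid-gray target of 0.18, a common photographic convention. The function names are hypothetical.

```python
import math
from typing import List


def auto_exposure_ev(luminances: List[float], target: float = 0.18) -> float:
    """Return the exposure offset (in stops) that moves the mean luminance to the target."""
    mean = sum(luminances) / len(luminances)
    if mean <= 0.0:
        return 0.0                      # nothing to meter on; leave exposure unchanged
    return math.log2(target / mean)


def apply_exposure(luminances: List[float], ev: float) -> List[float]:
    """Scale linear luminance values by 2**ev."""
    gain = 2.0 ** ev
    return [v * gain for v in luminances]


dark_view = [0.02, 0.05, 0.03, 0.08]
ev = auto_exposure_ev(dark_view)
corrected = apply_exposure(dark_view, ev)
```

A learned controller can weigh image content far more cleverly than a plain average, but the output is the same kind of quantity: one exposure value per view.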

Why This Matters: Towards True Digital Realism

The ability to create high-fidelity 3D reconstructions from 2D images has profound implications:

- Virtual Environments: Developers can create more realistic and immersive virtual worlds for gaming, training simulations, and metaverse applications.

- Film and VFX: Filmmakers can generate complex scenes and visual effects with unprecedented realism, reducing the cost and complexity of traditional methods.

- Autonomous Vehicles: Training self-driving cars in highly realistic virtual environments allows for safer and more comprehensive testing of driving scenarios.

- Digital Archiving: Archivists can preserve historical sites and objects as detailed 3D models.

Furthermore, the underlying principle of separating factual data from observational biases – akin to separating the house’s true color from the effect of the sunglasses – offers a compelling analogy for critical thinking and self-awareness in everyday life.

Limitations and Future Work

Despite its impressive capabilities, PPISP is not without its limitations. The current method assumes cameras follow strict global rules for image processing. However, modern smartphone cameras often employ ‘local tone mapping,’ which selectively brightens or darkens specific areas (like a face or a window). These localized adjustments can confuse the PPISP AI, as they deviate from the global physical equations it relies on.
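A toy example shows why local adjustments are a problem for a global model. The sketch below fits a single brightness gain to a row of pixels by least squares, once for a globally brightened image and once for an image where only part of the frame was brightened. The pixel values and the function names `fit_global_gain` and `residual` are illustrative assumptions, and a one-parameter gain stands in for PPISP's much richer model.

```python
from typing import List


def fit_global_gain(scene: List[float], photo: List[float]) -> float:
    """Least-squares estimate of a single gain g such that photo ~= g * scene."""
    return sum(s * p for s, p in zip(scene, photo)) / sum(s * s for s in scene)


def residual(scene: List[float], photo: List[float], gain: float) -> float:
    """Sum of squared errors left over after applying the fitted gain."""
    return sum((p - gain * s) ** 2 for s, p in zip(scene, photo))


scene = [0.1, 0.2, 0.4, 0.8]

# Global adjustment: every pixel is brightened by the same factor.
global_photo = [1.5 * v for v in scene]

# Local tone mapping (simplified): only the two darkest pixels are brightened.
local_photo = [2.0 * v for v in scene[:2]] + scene[2:]

global_gain = fit_global_gain(scene, global_photo)
local_gain = fit_global_gain(scene, local_photo)

global_error = residual(scene, global_photo, global_gain)
local_error = residual(scene, local_photo, local_gain)
```

The global case is explained essentially perfectly by one number, while the local case leaves an error that no single gain can remove. A reconstruction is then tempted to push that leftover error into the scene itself, which is exactly the failure the method is trying to avoid.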

NVIDIA’s research team has made this advanced technique publicly available, a move lauded as a significant gift to the AI and computer vision communities. This work represents a substantial leap forward in computational photography, bringing us closer to a future where digital reconstructions are indistinguishable from reality.

Source: NVIDIA’s Insane AI Found The Math Of Reality (YouTube)