Nvidia Jetson Thor: A Robotics AI Powerhouse Emerges

Nvidia has unveiled the Jetson Thor developer kit, a compact yet potent AI computing platform aimed at the demanding needs of robotics. The standout feature is its 128 gigabytes of unified memory, shared between the CPU and GPU, a specification that immediately grabbed attention, especially at a price of $3,499. This capacity, coupled with 273 gigabytes per second of memory bandwidth, positions the Jetson Thor as a significant advancement for onboard AI processing in robots.

Understanding the Specs: Memory and Bandwidth

While the 128 GB of memory is the headline grabber, the 273 GB/s of memory bandwidth is crucial for understanding the device’s performance profile. For context, this is far lower than a high-end consumer GPU such as the RTX 5090 (around 1,790 GB/s), but it is high compared with conventional CPU-and-RAM configurations, where reaching similar speeds often takes a dual-socket, server-grade system. This balance of large capacity and moderate bandwidth suggests the Jetson Thor is not designed for heavy model training but is optimized for efficient AI inference directly on robotic platforms.
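A rough back-of-the-envelope estimate shows why bandwidth matters so much for inference. When a model generates one token at a time, it typically has to read its active weights from memory once per token, so bandwidth divided by the bytes read per token gives an approximate ceiling on decode speed. The sketch below assumes that simplified picture; the function name, the dense 30-billion-parameter model, and the weight precisions are illustrative assumptions, not measurements from the source.

```python
def max_decode_tokens_per_s(bandwidth_gb_s: float,
                            active_params_billion: float,
                            bytes_per_param: float) -> float:
    """Approximate ceiling on tokens/s if every active weight is read once per token.

    Ignores KV-cache traffic, compute limits, and software overhead,
    so real throughput will be lower.
    """
    gb_read_per_token = active_params_billion * bytes_per_param
    return bandwidth_gb_s / gb_read_per_token


# Hypothetical dense 30B-parameter model; bandwidth figures are from the text above.
for name, bandwidth in [("Jetson Thor", 273.0), ("RTX 5090", 1790.0)]:
    for label, bytes_per_param in [("16-bit", 2.0), ("4-bit", 0.5)]:
        bound = max_decode_tokens_per_s(bandwidth, 30.0, bytes_per_param)
        print(f"{name:12s} {label:6s} <= {bound:6.1f} tokens/s")
```

Under these assumptions, the ceiling on the Jetson Thor is roughly 4.5 tokens per second with 16-bit weights and roughly 18 with 4-bit weights. The RTX 5090 rows are a bandwidth comparison only: with 32 GB of memory, that card could not hold the 60 GB of 16-bit weights in the first place, which is the capacity-versus-bandwidth trade-off in miniature.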

Power Efficiency and Compact Design

A key advantage for robotics is the Jetson Thor’s remarkably low power consumption. The developer kit draws a maximum of 130 watts, typically powered via USB-C. This low power draw is critical for battery-operated robots, significantly extending operational time compared to more power-hungry solutions. The actual computing unit is a small, embeddable board, likely the T5000, with the bulk of the dev kit chassis serving as a heatsink and fan for thermal management. This modular design allows for integration into various robotic form factors.
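The effect on operating time is simple arithmetic: runtime is battery capacity divided by total power draw. The sketch below illustrates this with made-up numbers; the battery size, the motor and sensor draw, and the 450 W comparison system are hypothetical assumptions, and only the 130 W figure comes from the text.

```python
def runtime_hours(battery_wh: float, compute_w: float, other_w: float = 0.0) -> float:
    """Hours of operation for a battery of `battery_wh` watt-hours."""
    return battery_wh / (compute_w + other_w)


BATTERY_WH = 1000.0  # hypothetical battery pack
MOTORS_W = 150.0     # hypothetical draw from motors and sensors

options = [
    ("Jetson Thor dev kit at its 130 W maximum", 130.0),
    ("hypothetical 450 W GPU workstation", 450.0),
]
for label, compute_w in options:
    hours = runtime_hours(BATTERY_WH, compute_w, MOTORS_W)
    print(f"{label}: {hours:.1f} h")
```

Because the rest of the robot also draws power, the gain in runtime is smaller than the ratio of the two compute budgets alone would suggest.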

Local LLMs and VLMs on the Edge

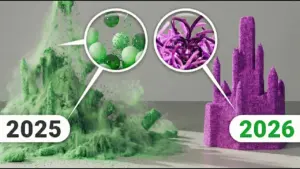

The Jetson Thor opens up new possibilities for running large language models (LLMs) and vision-language models (VLMs) directly on a robot, eliminating the need for constant cloud connectivity. The device can host sophisticated models like the 30-billion-parameter Qwen3 Coder model. Inference for this model clocked in at around 6.8 tokens per second, which is on the slower side for complex coding tasks but sufficient for many applications and comfortably faster than the pace of real-time speech.

The real power for robotics lies in its VLM capabilities. Models like Moondream 2, capable of object detection, visual querying, and pointing with high accuracy, can run on the Jetson Thor. The VLM can identify and locate objects flexibly, going beyond predefined object categories to understand descriptions like “the thing on the floor that should be picked up.” This allows robots to interact with their environment in more nuanced and intelligent ways.

Pipeline Parallelism for Enhanced VLM Performance

The Jetson Thor’s abundant memory helps offset its bandwidth limitation when developers employ pipeline parallelism: running multiple instances of a VLM, such as Moondream 2, side by side, with each instance processing a different frame. A single instance is limited by how quickly it can finish one frame, but several instances working concurrently raise overall throughput, effectively doubling or even tripling the frame rate for visual tasks. For example, running 15 instances of Moondream 2 can achieve up to 30 frames per second with only a minor increase in latency per instance. This technique uses the abundant memory to work around the bandwidth bottleneck, enabling smoother and more responsive visual processing for robots.
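The idea of spreading frames across several model instances can be sketched with a worker pool. This is a minimal illustration rather than the setup used in the source: `fake_vlm_infer` is a hypothetical stand-in that sleeps instead of running Moondream 2, and `process_frames` is an assumed helper name. Real instances on one device share compute and memory bandwidth, so throughput will not scale as cleanly as it does here.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_vlm_infer(frame_id: int, latency_s: float = 0.05) -> str:
    """Stand-in for one VLM call; sleeps to mimic per-frame latency."""
    time.sleep(latency_s)
    return f"detections for frame {frame_id}"


def process_frames(frame_ids, num_instances: int, latency_s: float = 0.05):
    """Hand each frame to the next free worker out of `num_instances`.

    Returns the results in frame order and the measured frames per second.
    """
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=num_instances) as pool:
        results = list(pool.map(lambda f: fake_vlm_infer(f, latency_s), frame_ids))
    elapsed = time.perf_counter() - start
    return results, len(results) / elapsed


if __name__ == "__main__":
    frames = list(range(30))
    for n in (1, 5, 15):
        _, fps = process_frames(frames, n)
        print(f"{n:2d} instance(s): {fps:5.1f} frames/s")
```

In this idealized model, throughput is roughly the number of instances divided by the per-frame latency. Read that way, 15 instances delivering 30 frames per second would correspond to about half a second of latency per frame for each instance, though that is an inference from the quoted figures, not a number reported in the source.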

Why This Matters: Real-World Impact

The Jetson Thor dev kit is poised to revolutionize robotics by bringing powerful, efficient AI processing directly to the robot. This enables:

- Enhanced Autonomy: Robots can make more complex decisions in real time, based on sensor data and environmental understanding, without relying on external servers.

- Improved Interaction: VLMs allow robots to better perceive and interpret their surroundings, leading to more natural and effective human-robot collaboration.

- Edge Computing Efficiency: The low power draw makes advanced AI feasible on battery-powered mobile robots, extending their operational range and utility.

- Cost-Effectiveness: For its capabilities, the $3,499 price offers a compelling value proposition compared to building equivalent onboard processing systems from scratch.

Availability and Future Outlook

The Jetson Thor developer kit is available now. While Nvidia has other powerful platforms like the DGX Spark (formerly Project DIGITS), the Jetson Thor is specifically tailored to the power and integration constraints of robotics. Memory bandwidth remains the core bottleneck for both, but the Jetson Thor’s design and low power consumption make it well suited to embedding advanced AI in the next generation of robots.

Source: Testing VLMs and LLMs for robotics w/ the Jetson Thor devkit (YouTube)