Nvidia Introduces DLSS 5, Blurring Lines Between Reality and Gaming

Nvidia has unveiled DLSS 5, a technology that uses artificial intelligence to fundamentally alter how video games look. Announcing it at GTC 2026, Nvidia CEO Jensen Huang called it the “GPT moment for graphics.” Where previous DLSS versions focused on performance, this new AI model reworks lighting and materials to create photorealistic visuals, marking a significant departure from its predecessors.

How DLSS 5 Reimagines Graphics

Unlike earlier DLSS versions, which focused on upscaling lower-resolution images (Super Resolution) or creating extra frames for smoother motion (Frame Generation), DLSS 5 acts as a neural rendering model. It takes the standard frame rendered by a game engine and analyzes its scene semantics. According to Nvidia, this means the model identifies characters, fabrics, hair, and even skin translucency. It also reads the environmental lighting: whether a scene is front-lit, back-lit, or overcast.

Based on this understanding, DLSS 5 generates a new version of the frame. It aims to create photorealistic lighting and material appearances that mimic real-world effects. Think of complex lighting interactions, like light scattering through skin or individual hair strands catching the light. These are effects that even advanced ray tracing struggles to achieve in real time because of their computational cost. DLSS 5 attempts to infer these details using its AI model, overlaying a neural rendering pass onto the existing game engine output without changing the core geometry or textures.

The Technology Behind the Visuals

The DLSS 5 process begins with the game engine rendering a frame from the scene’s geometry, textures, and lighting. The engine then outputs the visible image (color buffer) and motion data (motion vectors) that track pixel movement between frames. DLSS 5 uses both of these as input. A neural rendering model, trained extensively on Nvidia’s supercomputers, analyzes this data. Nvidia emphasizes that the model doesn’t just look at pixels; it understands the entire scene’s context.
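
To make the idea of motion vectors concrete, here is a minimal sketch of how per-pixel motion data can be used to carry the previous frame forward into the current one. It illustrates the general reprojection technique only, not Nvidia’s implementation; the names `reproject`, `Frame`, and `MotionField` are hypothetical, and the sign convention for the vectors is an assumption made for this example.

```python
from typing import List, Tuple

# Assumed toy representation: a grayscale image as rows of pixel values.
Frame = List[List[float]]
# Assumed convention: motion[y][x] = (dx, dy), the offset the content now at
# (x, y) has travelled since the previous frame.
MotionField = List[List[Tuple[int, int]]]


def reproject(prev_frame: Frame, motion: MotionField, fill: float = 0.0) -> Frame:
    """Warp the previous frame into the current frame using motion vectors."""
    height = len(prev_frame)
    width = len(prev_frame[0])
    out = [[fill] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx, dy = motion[y][x]
            # Where this pixel's content was located one frame ago.
            src_x, src_y = x - dx, y - dy
            if 0 <= src_x < width and 0 <= src_y < height:
                out[y][x] = prev_frame[src_y][src_x]
            # Otherwise the content was off-screen; keep the fill value.
    return out


prev_frame = [
    [0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0],
]
# Every pixel moved one step to the right between frames.
motion = [[(1, 0)] * 3 for _ in range(2)]
current_estimate = reproject(prev_frame, motion)
```

In this toy case the bright pixel moves from the middle column to the right column, and the left column, which has no history, falls back to the fill value. Data of this kind is what lets a per-frame model stay consistent with how the scene is actually moving.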

The result is an enhanced frame where lighting and materials are rendered with a new level of realism. This technology aims to simulate complex light behaviors that are computationally expensive for traditional rendering methods. The output is anchored to the original 3D content, ensuring the scene’s structure and motion remain intact.

Hardware Requirements and Initial Concerns

Nvidia demonstrated DLSS 5 at GTC 2026 using two high-end RTX 5090 graphics cards. One card rendered the game, while the second was dedicated solely to running the DLSS 5 neural model. Each RTX 5090 costs around $2,000, meaning the initial setup required approximately $4,000 worth of hardware. Nvidia plans to optimize the technology to run on a single GPU by its full launch.

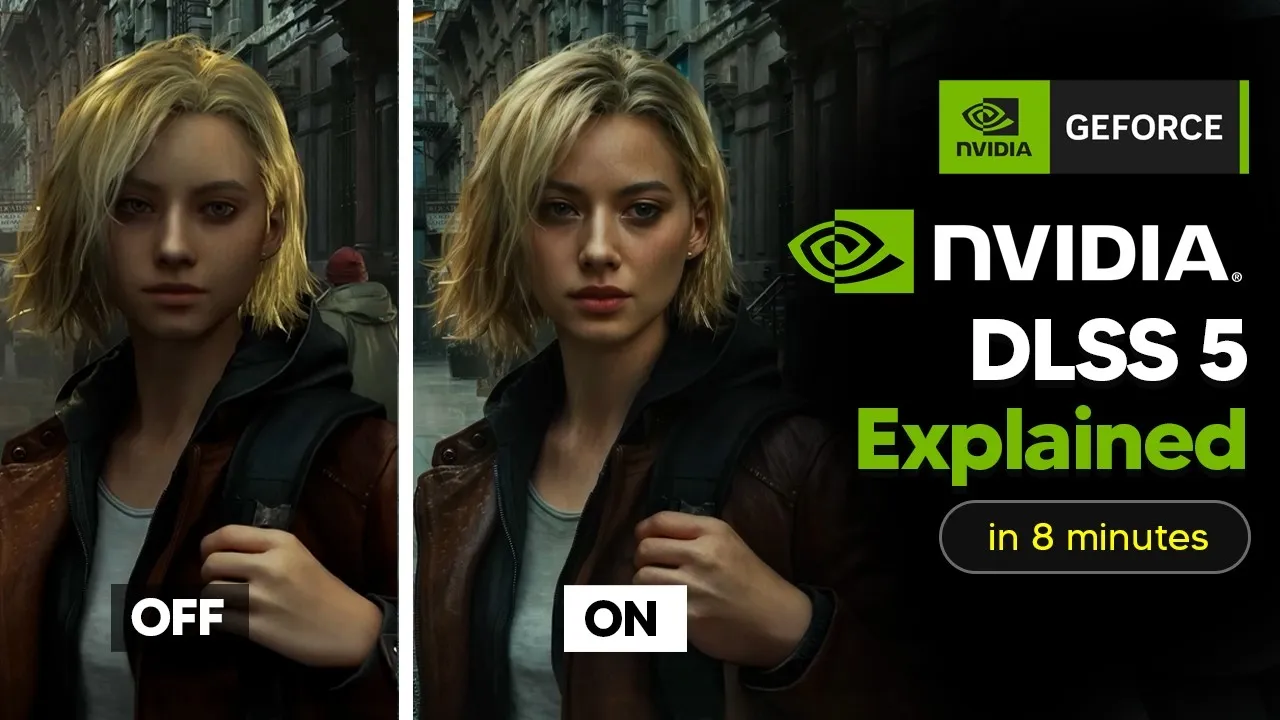

However, the technology immediately faced backlash. After a demo of Resident Evil Requiem, viewers noticed changes in character appearances, like fuller lips and sharper cheekbones. Critics such as PC Gamer’s Tyler Wilde suggested that the AI model was structurally altering facial features, leading to accusations of an “AI beauty filter” or “Yassification,” a term used when AI tools make subjects appear overly beautified, similar to heavily filtered social media images.

Nvidia’s Response and Developer Control

Nvidia has pushed back against these criticisms, stating that developers retain full artistic control over DLSS 5 implementation. They can use intensity sliders, color grading, and regional masking through the Streamline framework to precisely manage how and where DLSS 5 effects are applied. Bethesda confirmed that DLSS 5 integration in Starfield and Oblivion Remastered is entirely artist-controlled.
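
As a rough illustration of what an intensity slider combined with regional masking could mean in practice, the sketch below blends an original frame with an enhanced one, pixel by pixel. This is an assumption about the general approach, not the Streamline API; `blend_frame`, its parameters, and the grayscale frame format are all hypothetical.

```python
from typing import List

# Assumed toy representation: a grayscale image as rows of values in [0, 1].
Frame = List[List[float]]


def clamp01(value: float) -> float:
    """Clamp a value into the [0, 1] range."""
    return max(0.0, min(1.0, value))


def blend_frame(original: Frame, enhanced: Frame,
                intensity: float, mask: Frame) -> Frame:
    """Mix the enhanced frame into the original.

    intensity: global strength, 0 = effect off, 1 = full effect.
    mask:      per-pixel weight, 0 = leave this pixel untouched.
    """
    strength = clamp01(intensity)
    out = []
    for orig_row, enh_row, mask_row in zip(original, enhanced, mask):
        row = []
        for o, e, m in zip(orig_row, enh_row, mask_row):
            weight = strength * clamp01(m)
            # Linear interpolation between original and enhanced pixel.
            row.append(o + weight * (e - o))
        out.append(row)
    return out


original = [[0.0, 0.25]]
enhanced = [[1.0, 0.75]]
# Apply the effect at half strength, but mask out the second pixel
# (for example, a character's face the artist wants left unchanged).
mask = [[1.0, 0.0]]
result = blend_frame(original, enhanced, intensity=0.5, mask=mask)
```

Here the first pixel ends up halfway between the two versions, while the masked pixel keeps its original value. Setting `intensity` to 0 returns the original frame unchanged, which is also the simplest way to picture a player-facing off switch.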

Crucially, DLSS 5 is designed to be togglable, meaning players can simply turn it off if they dislike the results. However, concerns remain. Digital Foundry pointed out that since DLSS 5 uses the same Streamline framework as other DLSS versions, modders might force the technology onto games not designed for it, potentially bypassing official developer controls.

The Broader Industry Shift

DLSS 5 is presented as part of a larger industry trend towards neural rendering. Nvidia CEO Jensen Huang declared, “The future is neural rendering” at CES 2026. Google DeepMind has also showcased Genie 3, a world model capable of generating interactive 3D environments from text prompts in real time. DLSS 5 is seen as a hybrid approach, combining traditional rendering with generative AI, sitting at a midpoint in this evolving landscape.

The gaming industry appears to be embracing this shift, with major publishers like Bethesda, Capcom, Ubisoft, Tencent, and Warner Bros. Games on board. Over a dozen titles, including Assassin’s Creed Shadows, Phantom Blade, Resident Evil, and Starfield, are slated for DLSS 5 support at launch this fall. That these are major releases from leading publishers suggests broad early backing for the technology.

Why This Matters

The introduction of DLSS 5 comes at a contentious time, with ongoing debates about generative AI’s role in creative industries and artists actively pushing back against AI-generated content. In this climate, Nvidia’s announcement of a technology that alters character appearances and is dubbed a “GPT moment” has sparked significant discussion. Despite the controversy, hands-on reviews suggest the technology is impressive, with PCMag calling it “the most lifelike gaming graphics I’ve ever encountered.” Critics acknowledge legitimate leaps in environmental lighting and material rendering.

The core debate now centers not on whether neural rendering works (it clearly does) but on who controls the final visual output: the developer, the player, or the AI itself. DLSS 5 represents a powerful new tool that could redefine visual fidelity in gaming, but its implementation and impact will be closely watched.

Source: DLSS 5 Explained Clearly In 8 Minutes (How It Actually Works) (YouTube)