The Million-Dollar Mystery: P vs NP and the Future of Computation

Computer science’s most profound unresolved question, the P versus NP problem, stands as a monumental challenge with a $1 million prize offered by the Clay Mathematics Institute for its solution. At its core, the problem probes a fundamental aspect of computation: if a solution to a problem can be *verified* quickly, can that solution also be *found* quickly? This seemingly abstract question has profound implications for cryptography, artificial intelligence, and our understanding of the universe itself.

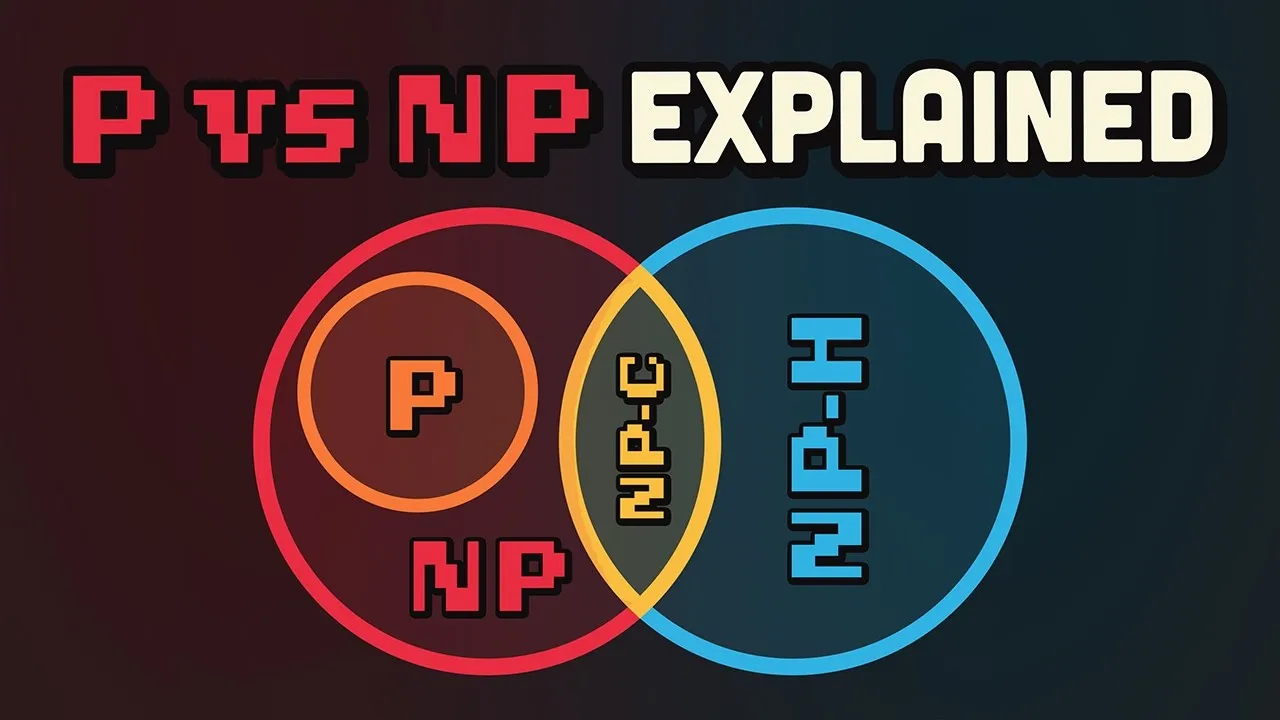

Understanding the Alphabet Soup: P and NP

To grasp the P vs NP debate, we must first understand what ‘P’ and ‘NP’ represent in the realm of computational complexity. These terms, defined formally by Steven Cook in 1971, categorize problems based on the time it takes for a computer to solve them.

Polynomial Time (P)

Problems categorized under ‘P’ are those that can be solved efficiently by a computer. The time it takes to solve them grows at a predictable, polynomial rate relative to the size of the input. A common analogy is sorting a list of names alphabetically. As the list doubles in size, the time to sort it increases, but in a manageable, deterministic way, often proportional to ‘n’ (the number of items) or ‘n log n’. These are the problems computers handle with relative ease, even as they scale.

Non-deterministic Polynomial Time (NP)

The category ‘NP’ encompasses problems where a given solution can be *verified* in polynomial time. However, *finding* that solution from scratch can be exponentially more difficult. Imagine being given a Sudoku puzzle. If someone provides you with a completed grid, you can quickly check if it’s a valid solution. But if you have to solve the puzzle yourself, it might take a considerable amount of time, especially for complex puzzles.

Other classic examples of NP problems include:

- The Traveling Salesman Problem: Finding the shortest possible route that visits a given set of cities exactly once and returns to the origin. Verifying a proposed route’s length is easy, but finding the absolute shortest route is incredibly hard for many cities.

- Prime Factorization: Given a large number, finding its prime factors. Multiplying two large prime numbers is a simple task (P), but factoring a very large number back into its prime components is computationally intensive and forms the basis of much modern encryption (suggesting NP).

The NP-Complete Conundrum

Within the NP category lies a special subset known as ‘NP-complete’ problems. These are considered the hardest problems in NP. The critical characteristic of NP-complete problems is that if a polynomial-time algorithm can be found to solve *any single* NP-complete problem, then it can be used to solve *all* NP-complete problems in polynomial time.

The first problem proven to be NP-complete was the Boolean Satisfiability Problem (SAT). SAT asks whether there exists an assignment of true or false values to variables in a complex logical expression that makes the entire expression true. Checking a proposed assignment is straightforward, but finding such an assignment for a large expression is extremely challenging.

Many other significant problems, including protein folding, circuit design, and complex scheduling tasks, fall into the NP-complete class. The difficulty in solving these problems forces real-world applications to often rely on ‘heuristics’ – approximation algorithms that provide good, but not necessarily optimal, solutions within a reasonable timeframe, sacrificing perfection for practicality.

The Stakes: P = NP vs. P != NP

The core of the P vs NP problem is the question: Does P equal NP? In simpler terms, if a solution can be checked quickly, can it also be found quickly?

If P = NP

The implications of proving P = NP would be revolutionary and potentially chaotic. Every problem currently considered computationally intractable would become efficiently solvable. This could lead to:

- Breaking Cryptography: All current encryption methods, including those protecting financial transactions, secure communications, and cryptocurrency wallets, would become instantly vulnerable.

- Solving Grand Challenges: Problems like protein folding, complex optimization, and advanced logistics could be solved with unprecedented speed, potentially leading to breakthroughs in medicine (like curing diseases) and efficient resource management.

- Total System Overhaul: The digital world would need a complete re-architecture to adapt to this new computational landscape.

If P != NP

Conversely, if it’s proven that P does not equal NP, it would confirm our current understanding that some problems are inherently harder to solve than to verify. This would suggest:

- The Limits of Computation: The universe, or the systems within it, has fundamental computational limits.

- Enduring Cryptography: Current encryption methods would remain secure, assuming their underlying mathematical assumptions hold.

- Philosophical Implications: Some theories suggest this outcome might imply we live in a simulation designed to conserve computational power, or that certain aspects of reality are fundamentally complex and cannot be shortcut.

Why This Matters

The P vs NP problem isn’t just an academic exercise; it underpins the security of our digital infrastructure and the feasibility of solving some of humanity’s most pressing challenges. For decades, computer scientists and mathematicians have grappled with this question, with no definitive proof emerging. The $1 million prize from the Clay Mathematics Institute underscores the profound importance and difficulty of finding a solution. Until a proof is found, the world operates on the assumption that P != NP, a reality that has enabled secure online interactions but also means many complex problems remain stubbornly difficult to solve efficiently.

While the P vs NP problem remains unsolved, the pursuit of computational efficiency continues. Companies are constantly developing new algorithms and infrastructure to tackle complex problems, especially in the burgeoning field of AI. For instance, platforms like MongoDB Atlas are addressing the backend infrastructure challenges faced by AI developers, allowing them to store and query diverse data types, including vector embeddings for AI applications, in a unified way. This innovation aims to streamline the development process, enabling faster deployment of AI features by simplifying complex data management, a crucial step in leveraging computational power for real-world solutions.

Source: The greatest unsolved problem in computer science… (YouTube)