Reddit’s Vast Comment Archive: A Treasure Trove for AI Development

The sprawling universe of Reddit, with its billions of comments spanning over a decade, presents an unparalleled resource for training and fine-tuning large language models (LLMs). While often overlooked in favor of more structured datasets, Reddit’s raw, conversational data offers a unique window into human interaction, diverse opinions, and evolving language. This article delves into the intricate process of harnessing this data, from acquisition and cleaning to its potential for creating more nuanced and realistic AI conversational agents.

The Challenge of Data Curation

The most significant hurdle in leveraging Reddit data for AI is not the model training itself, but the meticulous curation of the dataset. Unlike smaller, hand-crafted datasets, which allow for precise control, working with Reddit’s scale requires robust strategies for filtering, formatting, and processing. The goal is to transform terabytes of raw comments into a structured format suitable for LLM fine-tuning.

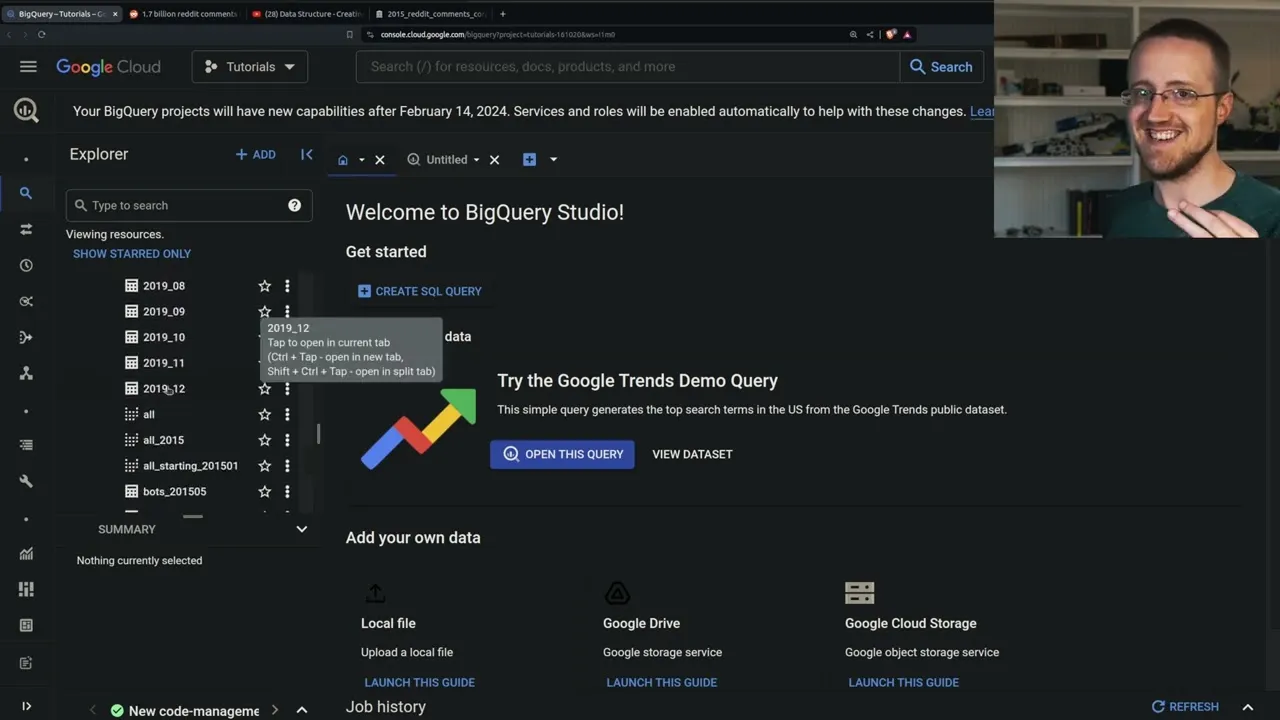

Acquiring the Data: From Torrents to BigQuery

Historically, accessing comprehensive Reddit data has been challenging. Early efforts involved torrents containing comments up to a certain point, like 2015. However, these are often outdated. A more accessible and extensive source is Google BigQuery, which hosts a remarkably large collection of Reddit comments. This dataset, maintained by FH BigQuery, spans from 2005 up to the end of 2019, offering billions of comments across various subreddits. While this data is sufficient to train a foundational LLM from scratch, it’s particularly valuable for fine-tuning existing models on specific conversational styles or topics.

Navigating BigQuery Exports

Exporting data from BigQuery, especially at this scale, presents its own set of technicalities. The process typically involves exporting data to Google Cloud Storage (GCS). Users can choose between formats like CSV or JSON, with options for compression. The author notes that while CSV might seem straightforward, its effectiveness diminishes when dealing with large bodies of text, making JSON a more manageable format for programmatic parsing. The export process itself can be resource-intensive, often requiring overnight downloads due to internet bandwidth limitations. The Google Cloud interface, even for experienced users, can be laggy and complex, adding to the challenge.

Decompression and Preprocessing

Once data is downloaded, often in compressed formats like .gzip, the next step is decompression. This is a critical preprocessing phase. While decompression can be done on-the-fly during data loading, performing it as a separate, one-time step is often more efficient. This is because the decompression process is CPU-intensive and performing it once ensures that subsequent iterations over the data are faster. The author experimented with various methods, including simple Python scripts and leveraging multiprocessing, to accelerate the decompression of thousands of files. Challenges arose with file naming conventions and ensuring the correct decompression algorithms were applied. Ultimately, performing decompression directly on the Network Attached Storage (NAS) where the data resided proved to be the most efficient method, likely due to reduced network transfer overhead and the NAS’s processing capabilities.

Structuring for Conversational AI

A key innovation discussed is moving beyond simple paired conversations (e.g., user-bot, instruction-response) to model more complex, multi-participant dialogues. Reddit conversations often involve numerous users contributing to a single thread. The proposed approach involves dynamically assigning unique IDs to each speaker within a conversation, including a designated ID for the bot. This allows the model to learn from interactions involving multiple entities, mirroring real-world conversational dynamics more accurately. This is crucial for developing LLMs that can generate more realistic and contextually aware responses in diverse social settings.

From Raw Data to Usable Features

After decompression and initial structuring, the data needs further refinement. This involves selecting relevant columns for training, such as the comment body, author, and timestamps. Columns like subreddit IDs or link IDs might be discarded to save memory and computational resources, especially when dealing with petabytes of data. The author also highlights the importance of filtering comments that lack replies, as the focus is on conversational turns. The goal is to create a dataset that captures the flow of dialogue, with each subsequent reply potentially forming a new training sample that includes the historical context.

The Fine-Tuning Experiment

The ultimate aim is to use this curated dataset to fine-tune LLMs. The author mentions a previous attempt to fine-tune a model, possibly Llama 2, on Wall Street Bets data, which suffered from insufficient data quantity and quality. The current effort aims to build a more robust dataset, potentially around 35,000 samples, from the broader Reddit comment archive, specifically targeting the conversational style of subreddits like Wall Street Bets. The potential for creating a fine-tuned model that better reflects the unique linguistic patterns and conversational dynamics of specific online communities is significant.

Why This Matters

The ability to effectively process and utilize massive, unstructured datasets like Reddit’s comment archive is fundamental to advancing AI. By developing sophisticated methods for data acquisition, cleaning, and structuring, researchers and developers can:

- Improve Conversational AI: Create chatbots and virtual assistants that engage in more natural, multi-turn dialogues, understanding complex social interactions.

- Enhance Understanding of Online Discourse: Analyze trends, sentiments, and linguistic evolution across vast online communities.

- Democratize Data Access: Make large-scale datasets more accessible and usable for a wider range of AI projects, from academic research to commercial applications.

- Develop Specialized Models: Fine-tune LLMs to mimic specific writing styles, tones, or knowledge domains found within niche online communities.

The ongoing work with Reddit data underscores the critical role of data engineering in the AI revolution. As models become more powerful, the quality and structure of the data they learn from will increasingly determine their real-world effectiveness and applicability.

Giveaway Announcement

As a special incentive, viewers are encouraged to sign up for Nvidia’s GPU Technology Conference (GTC) using the provided link. Signing up makes participants eligible for a chance to win an RTX 4080 Super. Attending at least one session is required for eligibility. GTC offers a wealth of sessions on cutting-edge AI and technology, including topics like video generation and the intersection of robotics and generative AI.

Source: Building an LLM fine-tuning Dataset (YouTube)