NVIDIA Unlocks Robot Learning Breakthrough

NVIDIA researchers have developed a new way for robots to learn how to interact with the world. The work aims to solve a long-standing problem in robotics: getting AI models that work well in computer simulations to perform reliably in the real world.

For years, researchers have trained robots in simulated environments, like video games, because it’s safer and faster than experimenting with physical robots. However, these simulations often don’t perfectly match reality. When robots trained in these digital worlds are put into real-world situations, they frequently fail in unexpected ways.

Bridging the Simulation Gap

The core challenge is that simulations, while helpful, are not the same as actual physical reality. They might mimic how things work but cannot fully replicate the complexities of the real world. This often leads to disappointment when a robot’s simulated skills don’t translate to physical tasks.

Previous attempts to close this gap involved feeding AI models vast amounts of video showing humans performing actions. This sounds promising, but it proved largely ineffective.

Human and robot bodies differ considerably, particularly in their hands and joints. The videos also lacked crucial information about forces and joint movements, making the data hard for AI to use effectively.

Dreamer AI: Four Genius Ideas

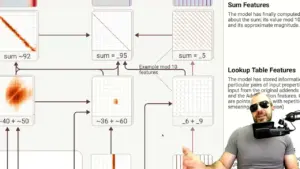

To overcome these limitations, researchers introduced a new approach, dubbed Dreamer AI, built on four key ideas. First, if videos lack specific labels about actions, the AI should be encouraged to figure out what’s happening on its own. It learns to infer actions and understand events without labels, much as a person who sees someone waving at a departing bus understands that they missed it.

Second, the system uses an incredibly large dataset, containing billions of video frames. To handle this massive amount of information, the AI is forced to compress data and focus only on the most important details. This process is similar to how musicians learn the fundamental notes of music to understand and create countless songs.

Third, the AI is trained to understand actions in a relative, not absolute, way. Instead of learning to pick up a cup at a specific spot in the world, it learns to perform actions relative to objects. This means if the cup is moved slightly, the robot can still understand how to interact with it, just as a chef uses a knife relative to a carrot, not by absolute world coordinates.
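The difference between relative and absolute actions can be illustrated with a minimal sketch. The `Vec2` type and the `to_relative` and `to_absolute` helpers are hypothetical names chosen for this example, and the 2D coordinates are made up; none of this is taken from NVIDIA's code. The sketch only shows why an offset stored relative to the cup still works after the cup moves.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec2:
    """A 2D point or offset (hypothetical helper type for this sketch)."""
    x: float
    y: float

    def __sub__(self, other: "Vec2") -> "Vec2":
        return Vec2(self.x - other.x, self.y - other.y)

    def __add__(self, other: "Vec2") -> "Vec2":
        return Vec2(self.x + other.x, self.y + other.y)


def to_relative(grasp_point: Vec2, object_pos: Vec2) -> Vec2:
    """Express an absolute grasp point as an offset from the object."""
    return grasp_point - object_pos


def to_absolute(offset: Vec2, object_pos: Vec2) -> Vec2:
    """Recover a world-frame target from an object-relative offset."""
    return object_pos + offset


# The grasp point is recorded once, relative to where the cup was.
cup = Vec2(2.0, 1.0)
grasp = Vec2(2.0, 1.5)
offset = to_relative(grasp, cup)

# The cup is moved; the same offset yields a valid new target.
moved_cup = Vec2(3.0, 1.25)
new_grasp = to_absolute(offset, moved_cup)
print(offset, new_grasp)
```

An action stored as the absolute point `grasp` would miss the moved cup, while the stored `offset` follows it.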

Fourth, the goal is for the AI to learn cause and effect. Feeding the AI actions in small, timed blocks prevents it from simply looking at the end result and guessing what happened. This forces it to truly understand how actions lead to consequences.
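One way to picture the "small, timed blocks" idea is a visibility mask: each predicted frame may only see the action blocks that came before it, never later ones. The sketch below is an assumption about how such a constraint could look, not a description of the actual model; `chunk_actions`, `causal_mask`, and the block size of 2 are all hypothetical.

```python
from typing import List, Sequence


def chunk_actions(actions: Sequence[float], chunk_size: int) -> List[List[float]]:
    """Split a flat action stream into consecutive fixed-size blocks."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    return [list(actions[i:i + chunk_size])
            for i in range(0, len(actions), chunk_size)]


def causal_mask(num_frames: int, num_chunks: int) -> List[List[bool]]:
    """Return mask[f][c], True when predicted frame f may see action block c.

    Frame f is assumed to result from blocks 0..f, so later blocks stay hidden.
    """
    return [[c <= f for c in range(num_chunks)] for f in range(num_frames)]


# Six made-up action values, grouped into blocks of two.
actions = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6]
chunks = chunk_actions(actions, chunk_size=2)
mask = causal_mask(num_frames=len(chunks), num_chunks=len(chunks))

for row in mask:
    print(row)
```

Because the first frame can only see the first block, the model in this sketch has no access to how the sequence ends when it predicts the beginning.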

Impressive Real-World Results

The results of this new method are striking when compared to older techniques. Robots using the new AI can now perform tasks like crumpling paper realistically, whereas older methods often resulted in hands passing through objects. They can also successfully move objects like lids, which previously would not budge.

These improvements represent a significant leap forward in how robots learn and interact with their physical surroundings. The system’s ability to understand and predict how the world will change is a major step towards more capable robots.

Speeding Up Learning with Distillation

While the full model is slow, requiring many steps for each prediction, a technique called ‘distillation’ makes it practical. Distillation involves training a smaller, faster AI model (the ‘student’) to mimic the performance of the larger, slower, but highly accurate model (the ‘teacher’).

This student model can then run much faster, achieving interactive speeds of about 10 frames per second. Crucially, it maintains very similar prediction accuracy to the original, slower model. This makes real-time interaction and learning possible for robots.
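The teacher and student relationship can be shown with a toy example. Everything here is an illustrative assumption: the `teacher` function is a stand-in that needs 50 refinement steps per prediction, and the `fit_student` helper fits a one-step linear model to the teacher's outputs by least squares. The real system's models and training procedure are not described in this article and are certainly far more complex.

```python
from typing import Callable, List


def teacher(x: float, steps: int = 50) -> float:
    """Slow stand-in model: refines its estimate over many small steps."""
    estimate = 0.0
    for _ in range(steps):
        estimate += 0.2 * ((2.0 * x + 1.0) - estimate)
    return estimate


def fit_student(xs: List[float]) -> Callable[[float], float]:
    """Fit y = w * x + b to the teacher's outputs (closed-form least squares)."""
    ys = [teacher(x) for x in xs]  # the student's only labels come from the teacher
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    var_x = sum((x - mean_x) ** 2 for x in xs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    w = cov_xy / var_x
    b = mean_y - w * mean_x
    return lambda x: w * x + b  # a single step per prediction


student = fit_student([0.0, 1.0, 2.0, 3.0, 4.0])

# Compare both models on an input the student was not fitted on.
gap = abs(student(2.5) - teacher(2.5))
print(f"teacher: {teacher(2.5):.4f}  student: {student(2.5):.4f}  gap: {gap:.2e}")
```

The student never sees ground-truth labels, only the teacher's predictions, yet it reproduces them with one multiplication and one addition instead of 50 loop iterations.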

Comparison to Other AI Models

This new method differs from previous AI models such as NeRD (Neural Robot Dynamics), which built detailed 3D environments. Dreamer AI operates in 2D, learning from video pixels. This allows it to understand a vast array of everyday objects, making it more versatile for common tasks.

Accessibility and Future Impact

One of the most exciting aspects of this development is its accessibility. NVIDIA is releasing the code and pre-trained models for free. This means anyone can download and run this AI model on their own devices without costly subscriptions.

This breakthrough brings us closer to robots that can perform complex tasks like folding laundry, cooking meals, or even assisting surgeons remotely during complex operations. The availability of powerful, free AI tools is accelerating the arrival of these advanced robotic capabilities.

The code and pre-trained models for this new AI are now available for download.

Source: NVIDIA’s New AI: The Biggest Leap In Robot Learning Yet (YouTube)