Anthropic’s Coding Model Hits Compute Wall

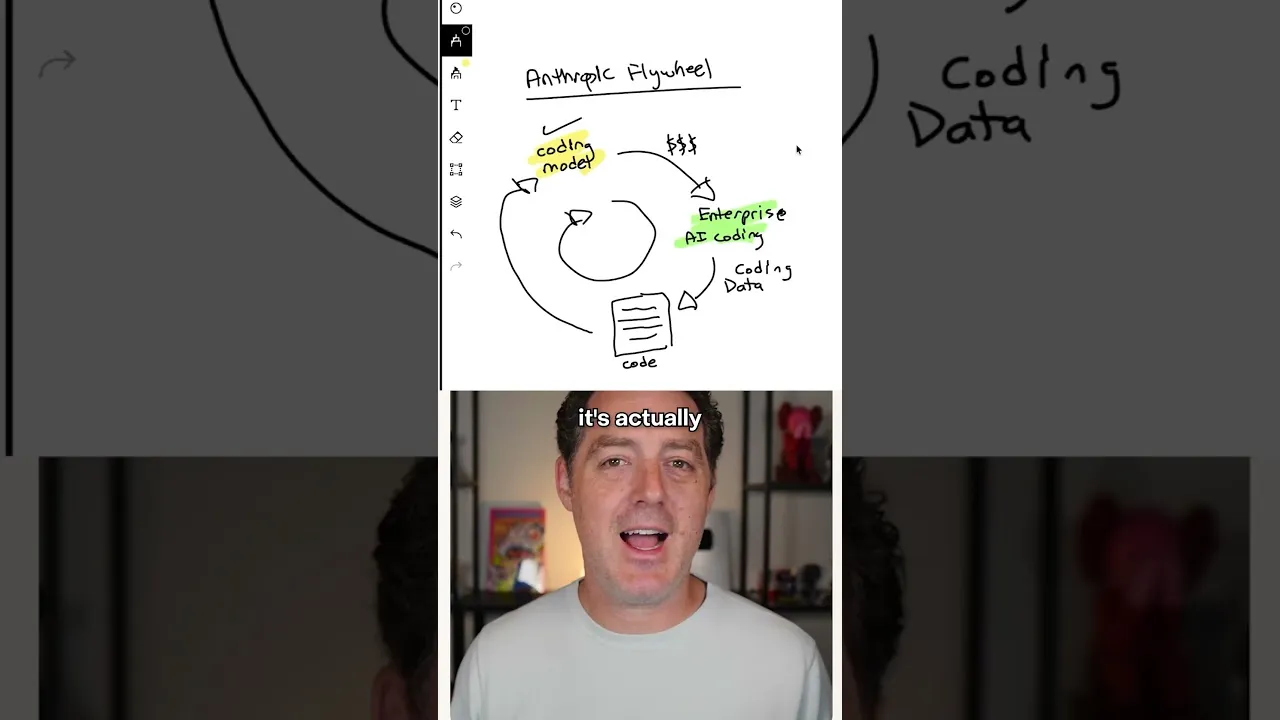

Anthropic, a major player in artificial intelligence, appears to be facing significant challenges with its highly successful coding model. The company built a powerful system that seemed to create a self-reinforcing growth cycle, which businesses often call a flywheel. In this cycle, the coding model generated both revenue and usage data, and together they fed the development of even better models.

The core of this impressive system was a focus on AI that helps write computer code. This specialized model was designed to be sold to businesses for their software development needs.

The data generated from using this model, essentially examples of code and how it’s used, then became valuable for training future, more advanced versions of the same AI. This created a loop where every improvement made the next generation of the model even stronger.

The Flywheel Explained

Imagine a machine that gets better the more you use it, and the more it gets better, the more people want to use it. That’s the idea behind Anthropic’s coding AI. When users employed the AI to write code, they provided valuable information.

This information was like fuel, helping Anthropic build a smarter AI the next time around. This process allowed the company to make more money by offering better services.

The money earned from these services was then reinvested into buying more computing power, also known as compute. Compute is essential for training and running complex AI models.

More compute meant Anthropic could handle more users and train its AI faster. This, in turn, made the AI even more capable, which attracted more users, generated more revenue, and kept the virtuous cycle going.

A Critical Bottleneck Emerges

However, this powerful engine seems to be sputtering. The primary issue appears to be a shortage of the necessary computing power.

Building and running advanced AI models, especially those that learn from vast amounts of data, requires immense computational resources. These resources are often provided by specialized hardware like powerful graphics processing units (GPUs).

Anthropic’s rapid success with its coding model may have outpaced its ability to secure enough compute. This lack of processing power is preventing the company from fully utilizing its data and training its next generation of AI models as quickly as planned. The flywheel, once a source of strength, is now showing signs of breaking down due to this fundamental resource constraint.

Why This Matters

The struggle for compute resources highlights a major challenge in the current AI industry. Companies are racing to develop more powerful AI, but the hardware needed to do so is in high demand and can be very expensive. This shortage affects not just Anthropic but potentially any AI company experiencing rapid growth and needing significant computational power.

For businesses relying on Anthropic’s AI tools, this could mean slower updates or limits on the model’s capabilities. It also raises questions about the long-term sustainability of AI development strategies that depend heavily on continuous access to cutting-edge hardware. The availability and cost of compute will be crucial factors in determining which AI companies can maintain their lead.

The situation suggests that Anthropic may need to find new ways to acquire more compute, perhaps through partnerships or by optimizing its existing infrastructure. The industry will be watching closely to see how the company addresses this compute bottleneck.

Source: Anthropic is in trouble (YouTube)